Introduction

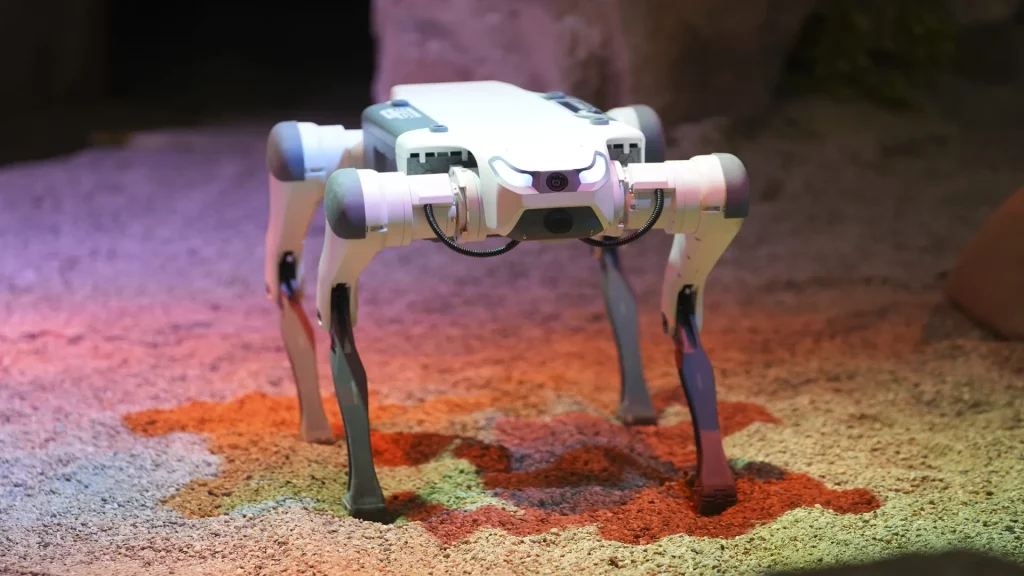

Quadruped robots—robots with four legs—have emerged as one of the most versatile robotic platforms due to their ability to navigate uneven terrain, climb obstacles, and maintain stability under dynamic conditions. Applications range from industrial inspection and search-and-rescue operations to service and exploration robots. Traditional control methods for quadruped locomotion, such as inverse kinematics, model predictive control (MPC), or central pattern generators (CPGs), often require extensive system modeling and are sensitive to unmodeled dynamics.

In recent years, deep reinforcement learning (DRL) has become a leading approach for achieving robust, adaptive, and agile locomotion in legged robots. Using frameworks such as PyTorch, researchers and engineers can train quadruped robots in simulated environments to learn complex behaviors that transfer to real-world systems. This article explores PyTorch-based DRL control for quadruped robots, covering theoretical foundations, system architecture, practical implementation, simulation, training strategies, and deployment considerations.

1. Background and Motivation

1.1 Challenges in Quadruped Robot Control

Quadruped locomotion is inherently complex due to:

- High Degrees of Freedom (DoF): Each leg typically has 3–4 joints, resulting in 12–16 DoF for the entire robot.

- Dynamic Stability Requirements: The robot must maintain balance while performing gaits such as walking, trotting, or running.

- Environmental Uncertainty: Terrain irregularities, friction changes, and external disturbances complicate control.

- Nonlinear Dynamics: Legged robots are highly nonlinear and underactuated systems.

Traditional model-based approaches often struggle to generalize across environments and require precise system identification.

1.2 Advantages of Deep Reinforcement Learning

DRL enables quadruped robots to:

- Learn policies directly from sensory input without explicit modeling.

- Adapt to dynamic environments and unstructured terrains.

- Develop emergent locomotion strategies such as trotting, turning, or jumping.

- Reduce reliance on hand-engineered controllers, allowing faster development cycles.

PyTorch, as a dynamic computation graph framework, provides flexibility for implementing DRL algorithms and integrating them with robotics simulation platforms like PyBullet, MuJoCo, and Isaac Gym.

2. Fundamentals of Deep Reinforcement Learning

2.1 Reinforcement Learning Framework

A quadruped robot can be modeled as an RL agent interacting with an environment:

- State $s_t$: Observations including joint positions, velocities, IMU data, foot contacts, and optionally terrain height maps.

- Action $a_t$: Joint torque commands, desired velocities, or foot target positions.

- Reward $r_t$: Feedback encouraging desired behaviors such as forward motion, stability, energy efficiency, and obstacle avoidance.

- Policy $\pi_\theta(a_t \mid s_t)$: The parameterized function mapping states to actions, often represented by a deep neural network.

- Objective: Maximize the expected cumulative reward $J(\theta) = \mathbb{E}\left[\sum_t \gamma^t r_t\right]$, where $\gamma$ is the discount factor.
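As a small illustration of the objective, the discounted sum inside the expectation can be computed for a single episode as follows. This is a minimal sketch; the function name `discounted_return` and the example rewards are made up for illustration.

```python
def discounted_return(rewards, gamma=0.99):
    """Return sum_t gamma**t * r_t for one episode's list of rewards."""
    total = 0.0
    # Iterating backwards applies one extra factor of gamma per time step.
    for r in reversed(rewards):
        total = r + gamma * total
    return total


# Three steps with reward 1 and gamma = 0.5: 1 + 0.5 + 0.25
print(discounted_return([1.0, 1.0, 1.0], gamma=0.5))  # 1.75
```

The policy objective is the average of this quantity over many episodes sampled with the current policy.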

2.2 Popular DRL Algorithms for Quadruped Locomotion

- Proximal Policy Optimization (PPO): Stable on-policy policy gradient method suitable for high-dimensional continuous control. It typically needs more samples than off-policy methods but scales well with parallel simulation.

- Soft Actor-Critic (SAC): Off-policy algorithm combining entropy regularization with Q-learning for exploration and robustness.

- Deep Deterministic Policy Gradient (DDPG): Actor-critic method effective for continuous control but sensitive to hyperparameters.

- Twin Delayed DDPG (TD3): Improves DDPG stability using twin Q-networks and delayed policy updates.

PPO and SAC are widely adopted in quadruped research due to their stability in training high-dimensional locomotion policies.
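To make the PPO entry concrete, the sketch below shows the clipped surrogate loss that gives the algorithm its stability. It assumes log-probabilities and advantages have already been computed for a batch; the function name and the default `clip_eps=0.2` are illustrative choices, not values taken from this article.

```python
import torch


def ppo_clip_loss(new_log_prob, old_log_prob, advantage, clip_eps=0.2):
    """PPO clipped surrogate objective, negated so it can be minimized."""
    # Probability ratio between the updated policy and the data-collecting policy.
    ratio = torch.exp(new_log_prob - old_log_prob)
    unclipped = ratio * advantage
    clipped = torch.clamp(ratio, 1.0 - clip_eps, 1.0 + clip_eps) * advantage
    # Taking the minimum removes the incentive to move the ratio far from 1.
    return -torch.min(unclipped, clipped).mean()
```

A full PPO update would usually add a value-function loss and an entropy bonus to this term.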

3. Quadruped Robot System Design

3.1 Mechanical and Sensor Design

A quadruped robot typically consists of:

- Leg Structure: Each leg with hip, thigh, and shin joints (3 DoF).

- Actuators: Electric motors or hydraulic systems providing torque and velocity control.

- Sensors:

  - IMU for orientation and acceleration

  - Joint encoders for position and velocity

  - Force sensors in feet for contact feedback

  - Optional cameras or LIDAR for terrain mapping

3.2 Simulation Environment

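Training in simulation is essential for DRL, because collecting the large number of samples required would be slow and risky on physical hardware: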

- Physics Engines: PyBullet, MuJoCo, Isaac Gym, and Gazebo provide realistic dynamics and collision modeling.

- Domain Randomization: Introduce variations in mass, friction, and joint parameters to improve real-world transfer.

- Observation Space Design: Include joint angles, velocities, previous actions, base orientation, and external perturbation signals.

- Action Space Design:

  - Torque control: direct motor torque commands

  - Position control: desired joint angles

  - Velocity control: target joint velocities
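For the position-control option, a common pattern is to let the policy output a normalized action, scale it into a joint-angle target around a default pose, and track that target with a PD law. The sketch below assumes this pattern; the function name, `action_scale`, and the gains `kp` and `kd` are hypothetical values that would need tuning for a given robot.

```python
def pd_torque(action, default_angle, joint_angle, joint_velocity,
              action_scale=0.25, kp=20.0, kd=0.5):
    """Map one normalized action in [-1, 1] to a joint torque via a PD law."""
    # The action shifts the target angle around the nominal standing pose.
    target = default_angle + action_scale * action
    # Proportional term pulls toward the target; derivative term damps motion.
    return kp * (target - joint_angle) - kd * joint_velocity


# A joint at rest in its default pose with zero action needs no torque.
print(pd_torque(0.0, default_angle=0.5, joint_angle=0.5, joint_velocity=0.0))  # 0.0
```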

4. PyTorch Implementation of DRL

4.1 Neural Network Policy Architecture

- Input Layer: Observation vector (state)

- Hidden Layers: Fully connected layers with ReLU or Tanh activations, typically 2–3 layers with 256–512 units

- Output Layer: Action vector (joint torques or positions)

- Output Activation: Tanh (or a rescaled Sigmoid) to bound continuous actions to a fixed range

Example PyTorch snippet for a policy network:

```python
import torch
import torch.nn as nn


class PolicyNetwork(nn.Module):
    """MLP policy mapping an observation vector to bounded actions."""

    def __init__(self, state_dim, action_dim):
        super().__init__()
        self.fc1 = nn.Linear(state_dim, 512)
        self.fc2 = nn.Linear(512, 512)
        self.fc3 = nn.Linear(512, action_dim)

    def forward(self, x):
        x = torch.relu(self.fc1(x))
        x = torch.relu(self.fc2(x))
        # Tanh bounds each action component to [-1, 1].
        return torch.tanh(self.fc3(x))
```
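The network above returns a single deterministic action. Policy gradient methods such as PPO instead sample actions from a distribution and need their log-probabilities. One possible variant, sketched here under assumptions, is a Gaussian policy with a learned, state-independent standard deviation. The class name `GaussianPolicy`, the hidden size, and the dimensions 48 and 12 are illustrative and not from the article.

```python
import torch
import torch.nn as nn
from torch.distributions import Normal


class GaussianPolicy(nn.Module):
    """Stochastic policy: an MLP predicts the mean, log_std is a free parameter."""

    def __init__(self, state_dim, action_dim, hidden=256):
        super().__init__()
        self.body = nn.Sequential(
            nn.Linear(state_dim, hidden), nn.Tanh(),
            nn.Linear(hidden, hidden), nn.Tanh(),
        )
        self.mean = nn.Linear(hidden, action_dim)
        self.log_std = nn.Parameter(torch.zeros(action_dim))

    def forward(self, obs):
        mean = self.mean(self.body(obs))
        # The per-dimension std broadcasts across the batch.
        return Normal(mean, self.log_std.exp())


policy = GaussianPolicy(state_dim=48, action_dim=12)  # assumed sizes
dist = policy(torch.zeros(4, 48))                      # batch of 4 observations
action = dist.sample()
# Sum per-joint log-probabilities to get one value per sample.
log_prob = dist.log_prob(action).sum(dim=-1)
```

At deployment time the mean of the distribution is often used directly instead of sampling.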

4.2 Training Loop

- Collect trajectories by running the current policy in simulation.

- Compute rewards and discounted returns.

- Update the policy using a DRL algorithm (e.g., PPO or SAC).

- Repeat for thousands to millions of steps until convergence.
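The second step of this loop can be sketched as follows. The article only mentions discounted returns; the example uses Generalized Advantage Estimation (GAE), which is one common choice alongside PPO and is an assumption here, as are the function name and default coefficients. It handles a single trajectory with no early termination.

```python
def compute_gae(rewards, values, last_value, gamma=0.99, lam=0.95):
    """Return (advantages, returns) for one trajectory using GAE."""
    advantages = [0.0] * len(rewards)
    gae = 0.0
    next_value = last_value  # value estimate for the state after the last step
    for t in reversed(range(len(rewards))):
        # One-step temporal-difference error.
        delta = rewards[t] + gamma * next_value - values[t]
        # Exponentially weighted sum of future TD errors.
        gae = delta + gamma * lam * gae
        advantages[t] = gae
        next_value = values[t]
    # Value-function regression targets.
    returns = [a + v for a, v in zip(advantages, values)]
    return advantages, returns
```

With `lam=1.0` and zero value estimates, the advantages reduce to plain discounted rewards-to-go.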

4.3 Reward Shaping

Reward design is critical:

- Forward Progress: Reward proportional to base velocity.

- Stability: Penalize large roll/pitch deviations.

- Energy Efficiency: Penalize excessive torque or action magnitude.

- Foot Slip Avoidance: Penalize feet that slide while in contact with the ground.

A well-shaped reward steers learning toward robust and natural locomotion behaviors.
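The four terms listed above could be combined into a single scalar per time step along the following lines. This is only a sketch: the function name, the quadratic form of the penalties, and all weights are hypothetical and would need tuning for a specific robot and task.

```python
def locomotion_reward(forward_vel, roll, pitch, torques, slip_speeds,
                      w_vel=1.0, w_tilt=0.5, w_energy=1e-3, w_slip=0.1):
    """Weighted sum of a progress reward and three penalties.

    slip_speeds holds the horizontal speed of each foot that is
    currently in contact with the ground.
    """
    tilt_penalty = roll ** 2 + pitch ** 2
    energy_penalty = sum(t ** 2 for t in torques)
    slip_penalty = sum(s ** 2 for s in slip_speeds)
    return (w_vel * forward_vel
            - w_tilt * tilt_penalty
            - w_energy * energy_penalty
            - w_slip * slip_penalty)
```

The relative weights matter: if the penalties dominate, the policy may learn to stand still rather than walk.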

5. Domain Adaptation and Real-World Transfer

5.1 Sim-to-Real Transfer Challenges

- Model inaccuracies and unmodeled dynamics can degrade performance.

- Sensor noise and actuator latency must be considered.
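One way to account for these effects during training is to inject them into the simulated control loop. The sketch below assumes a fixed delay measured in control steps and Gaussian observation noise; the class and function names are hypothetical, and real actuators may show variable latency instead.

```python
import random
from collections import deque


class DelayedActuator:
    """Applies each commanded action `delay_steps` control steps late."""

    def __init__(self, delay_steps, initial_action):
        # Pre-fill the queue so the first outputs are the initial action.
        self.buffer = deque([initial_action] * delay_steps)

    def step(self, action):
        self.buffer.append(action)
        return self.buffer.popleft()


def add_sensor_noise(observation, std=0.01, rng=random):
    """Return a copy of the observation with zero-mean Gaussian noise added."""
    return [x + rng.gauss(0.0, std) for x in observation]


actuator = DelayedActuator(delay_steps=2, initial_action=0.0)
print([actuator.step(a) for a in [1.0, 2.0, 3.0]])  # [0.0, 0.0, 1.0]
```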

5.2 Domain Randomization

- Randomize mass, friction, and motor parameters during training.

- Improves policy robustness to real-world variations.
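In practice this often means drawing a fresh set of physics parameters at the start of each episode. The following sketch shows the idea; the parameter names and ranges are illustrative assumptions, and applying the sampled values depends on the simulator in use.

```python
import random

# Hypothetical ranges; appropriate values depend on the robot and simulator.
RANDOMIZATION_RANGES = {
    "base_mass_scale": (0.8, 1.2),
    "ground_friction": (0.4, 1.25),
    "motor_strength_scale": (0.9, 1.1),
}


def sample_physics_params(rng):
    """Draw one value per parameter, uniformly within its range."""
    return {
        name: rng.uniform(low, high)
        for name, (low, high) in RANDOMIZATION_RANGES.items()
    }


rng = random.Random(0)  # seeded for reproducibility
params = sample_physics_params(rng)
# The sampled values would then be written into the simulator before reset.
```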

5.3 Policy Fine-Tuning on Hardware

- Start with a simulation-trained policy.

- Gradually introduce real-world perturbations.

- Apply reinforcement or imitation learning to refine performance.

5.4 Safety Considerations

- Use protective cages or tethers during early hardware trials.

- Monitor joint torque and foot contact to prevent damage.

6. Advanced Techniques in DRL for Quadrupeds

6.1 Curriculum Learning

- Begin with simple tasks (flat terrain) and gradually increase complexity (stairs, slopes, obstacles).

- Improves convergence speed and policy generalization.
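A simple way to schedule the difficulty is to promote or demote a terrain level based on recent success. The rule below is a hedged sketch: the function name, the thresholds, and the number of levels are assumptions rather than values from the article.

```python
def update_terrain_level(level, success_rate, max_level=9,
                         promote_at=0.8, demote_at=0.4):
    """Move up one level after consistent success, down after frequent failure."""
    if success_rate >= promote_at:
        return min(level + 1, max_level)
    if success_rate <= demote_at:
        return max(level - 1, 0)
    return level


print(update_terrain_level(0, success_rate=0.9))  # 1
```

Level 0 would correspond to flat terrain, with higher levels mapped to steeper slopes, taller stairs, or denser obstacles.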

6.2 Hierarchical Reinforcement Learning

- High-level policy determines gait or direction.

- Low-level controller executes joint-level motions.

- Allows modular and interpretable locomotion strategies.
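The two levels typically run at different rates. The sketch below shows one way to structure that loop, assuming the high-level policy is re-evaluated every `high_level_period` control steps; the function and parameter names are hypothetical, and both policies are passed in as plain callables.

```python
def run_hierarchical(high_level, low_level, observations, high_level_period=10):
    """Run a slow high-level policy and a fast low-level controller together."""
    command = None
    actions = []
    for step, obs in enumerate(observations):
        if step % high_level_period == 0:
            # Refresh the gait or direction command at the slower rate.
            command = high_level(obs)
        # The low-level controller acts every step, conditioned on the command.
        actions.append(low_level(obs, command))
    return actions
```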

6.3 Imitation Learning

- Use human teleoperation or motion capture data to bootstrap policies.

- Reduces exploration time and improves stability for complex maneuvers.
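The simplest form of this bootstrapping is behavior cloning, where the policy is regressed onto demonstrated actions before RL fine-tuning. The sketch below assumes demonstrations are available as observation-action pairs; here they are random placeholder tensors, and the network sizes are illustrative.

```python
import torch
import torch.nn as nn


def behavior_cloning_step(policy, optimizer, demo_obs, demo_actions):
    """One supervised update that pulls policy outputs toward demo actions."""
    loss = nn.functional.mse_loss(policy(demo_obs), demo_actions)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    return loss.item()


torch.manual_seed(0)
policy = nn.Sequential(nn.Linear(48, 64), nn.Tanh(), nn.Linear(64, 12), nn.Tanh())
optimizer = torch.optim.Adam(policy.parameters(), lr=1e-3)
# Placeholder data standing in for teleoperation or motion capture recordings.
demo_obs = torch.randn(32, 48)
demo_actions = torch.rand(32, 12) * 2 - 1
losses = [behavior_cloning_step(policy, optimizer, demo_obs, demo_actions)
          for _ in range(100)]
```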

6.4 Multi-Agent and Multi-Task Learning

- Train a single policy across multiple terrains or robots.

- Improves robustness and reduces the need for task-specific retraining.

7. Case Studies

7.1 MIT Cheetah Robot

- Utilized DRL to achieve agile running and jumping.

- Learned gait patterns without explicit trajectory planning.

- Real-world experiments validated simulation policies with minimal fine-tuning.

7.2 ANYmal Quadruped

- DRL applied for terrain-adaptive locomotion.

- Policies trained with domain randomization generalized to stairs, slopes, and rocky terrains.

- Integration with perception systems allowed obstacle-aware path planning.

7.3 Open-Source Projects

- OpenAI Gym Robotics + PyBullet: Enables quadruped training for research and education.

- Isaac Gym: High-speed parallel simulation using GPU acceleration for large-scale policy training.

8. Performance Evaluation Metrics

- Velocity Tracking: Ability to maintain desired forward speed.

- Stability Metrics: Base orientation variance and fall rates.

- Energy Efficiency: Torque and power consumption per distance traveled.

- Robustness: Policy performance under external perturbations and terrain variations.

- Generalization: Performance across unseen environments.
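Several of these metrics reduce to short formulas over logged episode data. The helpers below are a sketch under assumptions: the function names are made up, and the dimensionless cost of transport is used as one common way to express energy per distance traveled.

```python
import math


def velocity_tracking_rmse(measured, commanded):
    """Root-mean-square error between measured and commanded forward speed."""
    squared = [(m - c) ** 2 for m, c in zip(measured, commanded)]
    return math.sqrt(sum(squared) / len(squared))


def cost_of_transport(energy_joules, mass_kg, distance_m, g=9.81):
    """Energy used, normalized by weight and distance (dimensionless)."""
    return energy_joules / (mass_kg * g * distance_m)


def fall_rate(episode_fell):
    """Fraction of evaluation episodes that ended in a fall."""
    return sum(episode_fell) / len(episode_fell)


print(fall_rate([True, False, False, False]))  # 0.25
```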

9. Future Directions

9.1 Integration with Perception

- Visual and proprioceptive sensors for obstacle-aware locomotion.

- DRL policies incorporating depth images or point clouds.

9.2 Real-Time Adaptation

- Online learning to adapt to changing terrain or payload.

- Meta-RL approaches for rapid adaptation across tasks.

9.3 Multi-Robot Collaboration

- Coordinated behaviors between multiple quadruped robots in exploration or logistics tasks.

9.4 Energy-Aware DRL

- Incorporating battery constraints and optimizing gait for prolonged operation.

Conclusion

Implementing deep reinforcement learning control of quadruped robots using PyTorch represents a significant advancement in legged robotics. DRL enables:

- Robust and adaptive locomotion in complex environments.

- Reduction of dependency on precise dynamic models.

- Emergent gait strategies optimized for stability, energy, and task performance.

By combining PyTorch-based neural networks, physics simulations, reward shaping, and domain adaptation, engineers can train quadruped robots to navigate real-world environments efficiently and safely. With advances in hierarchical RL, curriculum learning, and multi-task training, the future of quadruped robotics promises greater autonomy, agility, and practical deployment across industries.