Introduction: The Rise of Multi-Sensor Fusion

In modern robotics, accurate perception and localization are essential for autonomous operation. Robots increasingly rely on multi-sensor systems that combine Inertial Measurement Units (IMUs), LiDAR, and cameras to perceive their environment in real time. Each sensor offers unique advantages:

- IMU provides high-frequency acceleration and angular velocity data for motion estimation.

- LiDAR delivers precise 3D distance measurements, ideal for mapping and obstacle detection.

- Cameras capture rich visual information, including textures, colors, and semantic cues.

By fusing these heterogeneous data streams, robots achieve robust, accurate, and context-aware perception. This fusion compensates for individual sensor limitations, mitigates noise, and improves reliability.

This article covers the principles, algorithms, and error correction methods behind IMU + LiDAR + camera fusion, with applications in autonomous navigation, mapping, and mobile robotics.

1. Fundamentals of Sensor Fusion

1.1 Definition and Objectives

Sensor fusion refers to combining data from multiple sensors to produce more accurate, reliable, and robust estimates of a system’s state than could be obtained from any single sensor alone. Key objectives include:

- Noise reduction

- Compensation for sensor drift or failure

- Enhanced spatial and temporal resolution

- Improved situational awareness for decision-making
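
The noise-reduction objective can be illustrated with inverse-variance weighting, one of the simplest ways to combine two independent measurements of the same quantity. The sketch below is only an illustration: the function name, the readings, and the variances are hypothetical and do not come from any particular sensor.

```python
def fuse_measurements(values, variances):
    """Fuse independent measurements of one quantity by inverse-variance weighting."""
    weights = [1.0 / v for v in variances]
    total = sum(weights)
    fused_value = sum(w * x for w, x in zip(weights, values)) / total
    fused_variance = 1.0 / total  # never larger than the smallest input variance
    return fused_value, fused_variance


# Hypothetical readings of the same distance (metres) and their noise variances.
lidar_range, lidar_var = 10.02, 0.01
camera_range, camera_var = 10.30, 0.09

fused, fused_var = fuse_measurements(
    [lidar_range, camera_range], [lidar_var, camera_var]
)
print(f"fused = {fused:.3f} m, variance = {fused_var:.4f}")
```

The fused value sits closer to the lower-noise sensor, and the fused variance is smaller than either input variance, which is the basic reason fusion reduces noise.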

1.2 Types of Sensor Fusion

- Low-Level Fusion (Data-Level Fusion)

  - Raw sensor measurements are combined directly.

  - Requires precise temporal synchronization and error calibration.

- Feature-Level Fusion

  - Extracts features (edges, corners, point clouds) from each sensor and merges them.

  - Reduces computational load while maintaining accuracy.

- Decision-Level Fusion

  - Sensors independently generate predictions, which are then combined using Bayesian inference or voting mechanisms.

  - Useful for fault-tolerant decision-making.
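
Decision-level fusion by Bayesian inference or voting can be sketched as follows. This is a minimal example that assumes each sensor pipeline reports a probability that an obstacle is present, that the pipelines are conditionally independent, and that they share the same prior. The function names and probabilities are hypothetical.

```python
def fuse_decisions(probabilities, prior=0.5):
    """Naive-Bayes fusion of per-sensor posteriors that share one prior."""
    prior_odds = prior / (1.0 - prior)
    odds = prior_odds
    for p in probabilities:
        # Each sensor contributes its likelihood ratio (posterior odds / prior odds).
        odds *= (p / (1.0 - p)) / prior_odds
    return odds / (1.0 + odds)


def majority_vote(probabilities, threshold=0.5):
    """Voting alternative: each sensor casts one yes/no vote."""
    votes = sum(p > threshold for p in probabilities)
    return votes > len(probabilities) / 2


# Hypothetical obstacle probabilities from LiDAR, camera, and a third detector.
sensor_probs = [0.7, 0.8, 0.4]
fused_prob = fuse_decisions(sensor_probs)
voted = majority_vote(sensor_probs)
print(f"Bayesian fusion: {fused_prob:.3f}, majority vote: {voted}")
```

Voting tolerates one badly wrong sensor, while the Bayesian combination uses how confident each sensor is. Which one fits depends on how well calibrated the per-sensor probabilities are.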

2. Individual Sensor Characteristics

2.1 Inertial Measurement Units (IMU)

- IMUs consist of accelerometers and gyroscopes, measuring linear acceleration and angular velocity.

- Advantages: High-frequency updates (100–1000 Hz), independent of lighting or external features.

- Limitations:

  - Bias drift: integrating a biased acceleration twice makes the position error grow roughly quadratically with time, so IMU-only positioning degrades quickly.

  - Sensitive to vibration and mechanical noise.
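
To see why bias matters, consider a stationary IMU whose accelerometer reports a small constant bias. Integrating twice turns that bias b into a position error of about 0.5 · b · t². The sketch below checks this numerically. The bias value and sample rate are hypothetical.

```python
def integrate_bias(bias, rate_hz, duration_s):
    """Double-integrate a constant accelerometer bias for a stationary sensor."""
    dt = 1.0 / rate_hz
    velocity = 0.0
    position = 0.0
    for _ in range(int(round(duration_s * rate_hz))):
        velocity += bias * dt      # first integration: acceleration -> velocity
        position += velocity * dt  # second integration: velocity -> position
    return position


bias = 0.05  # m/s^2, hypothetical uncorrected accelerometer bias
for t in (1, 10, 60):
    drift = integrate_bias(bias, rate_hz=200, duration_s=t)
    print(f"t = {t:3d} s  drift = {drift:8.3f} m  (0.5*b*t^2 = {0.5 * bias * t**2:.3f} m)")
```

A tenfold increase in time gives roughly a hundredfold increase in position error, which is why IMU data is normally fused with other sensors rather than integrated on its own.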

2.2 LiDAR

- LiDAR sensors emit laser pulses and measure return time to obtain precise 3D distances.

- Advantages: Accurate geometric mapping, long-range detection, largely insensitive to ambient lighting.

- Limitations:

  - Sparse sampling in some environments

  - Susceptible to rain, dust, or reflective surfaces

  - Lower temporal resolution compared to IMUs
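
The time-of-flight principle mentioned at the start of this subsection reduces to one formula: the pulse travels to the target and back, so the range is half the round-trip time multiplied by the speed of light. A minimal sketch, with a hypothetical function name and example timing:

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def range_from_time_of_flight(round_trip_s):
    """Convert a measured round-trip time into a one-way distance."""
    return SPEED_OF_LIGHT * round_trip_s / 2.0


# A return received 200 nanoseconds after emission corresponds to roughly 30 m.
distance_m = range_from_time_of_flight(200e-9)
print(f"{distance_m:.3f} m")
```

Because light covers about 30 cm per nanosecond, centimetre-level ranging requires sub-nanosecond timing, which is one reason LiDAR hardware is comparatively expensive.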

2.3 Cameras

- Cameras capture rich visual information, useful for object recognition, semantic mapping, and visual odometry.

- Advantages: High-resolution contextual data, essential for SLAM and perception.

- Limitations:

  - Performance depends on lighting and texture

  - Requires complex image processing

  - Susceptible to motion blur during high-speed maneuvers

3. Principles of Multi-Sensor Fusion

3.1 Complementary Strengths

- IMU provides high-frequency motion cues but drifts over time.

- LiDAR offers accurate spatial mapping but updates at lower frequency.

- Camera contributes detailed visual features but may be affected by environmental conditions.

Fusing these sensors combines temporal precision, spatial accuracy, and semantic awareness, producing a cohesive perception model.

3.2 Coordinate Transformation

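- Sensors have different reference frames; fusion requires transforming all measurements into a common frame using rigid-body transforms, each consisting of a rotation matrix and a translation vector.

- Extrinsic calibration estimates these transforms, that is, the relative poses between the IMU axes, the LiDAR scanning frame, and the camera frame.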
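
As a concrete illustration, a point p measured in the LiDAR frame is expressed in another frame as R · p + t. The sketch below assumes a hypothetical mounting in which the LiDAR is rotated 90 degrees about the vertical axis and offset from the robot base. The numbers are made up for the example and would normally come from extrinsic calibration.

```python
import math


def rotation_z(yaw_rad):
    """3x3 rotation matrix for a rotation about the z (vertical) axis."""
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    return [[c, -s, 0.0],
            [s,  c, 0.0],
            [0.0, 0.0, 1.0]]


def transform_point(rotation, translation, point):
    """Apply p' = R * p + t to a 3D point."""
    return [
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    ]


# Hypothetical LiDAR-to-base extrinsics: 90 degree yaw, 0.1 m forward, 0.2 m up.
R_base_lidar = rotation_z(math.pi / 2)
t_base_lidar = [0.1, 0.0, 0.2]

point_in_lidar = [1.0, 0.0, 0.0]
point_in_base = transform_point(R_base_lidar, t_base_lidar, point_in_lidar)
print([round(v, 3) for v in point_in_base])
```

The same pattern chains across frames, for example LiDAR to base and then base to camera, so an error in any one extrinsic propagates to every measurement that passes through it.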

4. Data Fusion Algorithms

4.1 Extended Kalman Filter (EKF)

- EKF is widely used for real-time state estimation.

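- Steps:

  - Predict state using IMU data (motion model)

  - Correct prediction using LiDAR and camera measurements (observation model)

- Handles nonlinear motion and observation models by linearizing them around the current estimate, and maintains a covariance that quantifies the uncertainty of the estimate.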
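
The predict/correct cycle can be sketched with a deliberately small EKF that has a single state variable. The scenario is hypothetical: a robot moves along a corridor, its velocity estimate (standing in for integrated IMU data) is biased, and a range sensor measures the distance to a landmark at a known position. The range is a nonlinear function of position, so the update uses its Jacobian. All names and noise values are assumptions made for illustration.

```python
import math


def ekf_predict(x, P, velocity, dt, Q):
    """Motion model: advance the position and inflate the variance."""
    return x + velocity * dt, P + Q


def ekf_update(x, P, z, landmark_x, lateral_offset, R):
    """Correct the state with a nonlinear range measurement to a known landmark."""
    dx = x - landmark_x
    z_pred = math.hypot(dx, lateral_offset)  # h(x): predicted range
    H = dx / z_pred                          # Jacobian dh/dx at the current estimate
    S = H * P * H + R                        # innovation variance
    K = P * H / S                            # Kalman gain
    return x + K * (z - z_pred), (1.0 - K * H) * P


dt, true_velocity, measured_velocity = 0.1, 1.0, 1.1  # 10% velocity bias
landmark_x, lateral_offset = 10.0, 2.0
true_x, dead_reckoned_x = 0.0, 0.0
x_est, P = 0.0, 1.0

for _ in range(50):
    true_x += true_velocity * dt
    dead_reckoned_x += measured_velocity * dt  # prediction only, no correction
    x_est, P = ekf_predict(x_est, P, measured_velocity, dt, Q=0.01)
    z = math.hypot(true_x - landmark_x, lateral_offset)  # noise-free range, for clarity
    x_est, P = ekf_update(x_est, P, z, landmark_x, lateral_offset, R=0.01)

print(f"dead-reckoning error: {abs(dead_reckoned_x - true_x):.3f} m")
print(f"EKF error:            {abs(x_est - true_x):.3f} m")
```

In a real system the state is a vector (pose, velocity, biases) and the scalars above become matrices, but the structure of the two steps is the same.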

4.2 Unscented Kalman Filter (UKF)

- Uses sigma points to approximate nonlinear transformations, providing more accurate estimation than EKF in highly nonlinear scenarios.

- Well-suited for aggressive robot maneuvers and complex environments.
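
The sigma-point idea can be shown on a one-dimensional example. The sketch below uses the basic unscented transform with a single scaling parameter kappa, which is one of several common parameterizations. The function name and the test function f(x) = x² are chosen only for illustration.

```python
import math


def unscented_transform(mean, var, f, kappa=2.0):
    """Propagate a scalar Gaussian through f using three sigma points."""
    n = 1  # state dimension
    spread = math.sqrt((n + kappa) * var)
    points = [mean, mean + spread, mean - spread]
    weights = [kappa / (n + kappa), 0.5 / (n + kappa), 0.5 / (n + kappa)]
    ys = [f(p) for p in points]
    y_mean = sum(w * y for w, y in zip(weights, ys))
    y_var = sum(w * (y - y_mean) ** 2 for w, y in zip(weights, ys))
    return y_mean, y_var


def square(x):
    return x * x


mean, var = 1.0, 0.25
ut_mean, ut_var = unscented_transform(mean, var, square)
linearized_mean = square(mean)  # what a first-order (EKF-style) approximation predicts

print(f"unscented mean = {ut_mean:.4f}, variance = {ut_var:.4f}")
print(f"linearized mean = {linearized_mean:.4f}")
```

For a Gaussian input the exact mean of x² is mean² + var = 1.25, which the sigma points recover, while linearizing at the mean gives 1.0. This is the kind of bias the UKF avoids when the models are strongly nonlinear.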

4.3 Factor Graphs and Optimization

- Formulates sensor fusion as a graph-based optimization problem, with nodes representing poses and factors representing sensor measurements.

- The graph is solved with nonlinear least-squares optimization; this formulation is common in visual-LiDAR SLAM.
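
A toy version of this formulation is a chain of three one-dimensional poses connected by odometry factors, with a prior on the first pose and one map-based position fix on the last. The problem is linear, so a single least-squares solve is enough; real systems iterate because their factors are nonlinear. The factor format, function names, and numbers below are hypothetical.

```python
def solve_linear_system(A, b):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x


def optimize_pose_chain(factors, num_poses):
    """Each factor is (i, j, z, sigma): x[j] - x[i] = z, or x[j] = z if i is None."""
    H = [[0.0] * num_poses for _ in range(num_poses)]  # information matrix
    g = [0.0] * num_poses                              # information vector
    for i, j, z, sigma in factors:
        w = 1.0 / sigma ** 2
        jac = {j: 1.0}
        if i is not None:
            jac[i] = -1.0
        for a, ja in jac.items():
            g[a] += w * ja * z
            for b, jb in jac.items():
                H[a][b] += w * ja * jb
    return solve_linear_system(H, g)


factors = [
    (None, 0, 0.0, 0.001),  # prior: the first pose is the origin
    (0, 1, 1.0, 0.1),       # odometry: moved 1.0 m
    (1, 2, 1.0, 0.1),       # odometry: moved 1.0 m
    (None, 2, 2.2, 0.1),    # position fix on the last pose, e.g. from scan matching
]
poses = optimize_pose_chain(factors, num_poses=3)
print([round(p, 3) for p in poses])
```

The optimizer spreads the 0.2 m disagreement between odometry and the position fix across the chain according to each factor's uncertainty, instead of trusting only the most recent measurement as a filter would.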

4.4 Particle Filters

- Maintain a set of hypotheses (particles) representing possible robot states.

- Each particle is weighted according to sensor likelihood; resampling ensures robust tracking under uncertainty.
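
A single weighting-and-resampling step can be sketched in one dimension. The example assumes a Gaussian measurement likelihood and uses simple multinomial resampling. The particle count, noise level, and measurement are hypothetical.

```python
import math
import random


def particle_filter_update(particles, measurement, sigma, rng):
    """Weight particles by a Gaussian likelihood, then resample with replacement."""
    weights = [math.exp(-0.5 * ((measurement - p) / sigma) ** 2) for p in particles]
    total = sum(weights)
    weights = [w / total for w in weights]
    return rng.choices(particles, weights=weights, k=len(particles))


rng = random.Random(0)  # fixed seed so the run is repeatable

# Start with no knowledge: particles spread uniformly over a 10 m corridor.
particles = [rng.uniform(0.0, 10.0) for _ in range(2000)]

# One position measurement at 4.0 m with 0.5 m standard deviation.
particles = particle_filter_update(particles, measurement=4.0, sigma=0.5, rng=rng)

estimate = sum(particles) / len(particles)
print(f"estimated position: {estimate:.2f} m")
```

After resampling, the particles concentrate around the measurement. A full filter would also move the particles with a motion model between updates, which is where IMU data would enter.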

5. Error Sources and Correction

5.1 IMU Drift

- Integration of small bias errors causes position and orientation drift.

- Correction methods:

  - Fusing IMU data with exteroceptive pose estimates from LiDAR or camera-based SLAM, which do not accumulate integration error in the same way

  - Periodic bias estimation and online calibration
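
One simple form of bias estimation is to average gyroscope readings while the robot is known to be stationary and subtract that average afterwards. The sketch below assumes such a stationary window is available. Online methods instead estimate the bias continuously as part of the filter state. The sample values are hypothetical.

```python
def estimate_bias(stationary_samples):
    """Average the readings taken while the sensor is known to be at rest."""
    return sum(stationary_samples) / len(stationary_samples)


def integrate_heading(rates, dt, bias=0.0):
    """Integrate angular rate (rad/s) into a heading change (rad)."""
    return sum((rate - bias) * dt for rate in rates)


# Hypothetical gyro readings (rad/s) captured while the robot is not rotating.
stationary_samples = [0.011, 0.009, 0.010, 0.012, 0.008]
bias = estimate_bias(stationary_samples)

# Ten seconds at 100 Hz with no real rotation: the sensor reports only its bias.
rates = [0.010] * 1000
raw_heading = integrate_heading(rates, dt=0.01)
corrected_heading = integrate_heading(rates, dt=0.01, bias=bias)

print(f"bias = {bias:.4f} rad/s")
print(f"raw heading drift = {raw_heading:.4f} rad, corrected = {corrected_heading:.6f} rad")
```

Because bias changes with temperature and time, a one-off estimate like this goes stale, which is why the estimate needs to be refreshed periodically.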

5.2 LiDAR Noise and Outliers

- Laser returns can be distorted by reflective surfaces or atmospheric conditions.

- Correction methods:

  - Statistical outlier removal

  - Scan matching with robust optimization
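
Statistical outlier removal is commonly implemented by comparing each point's mean distance to its nearest neighbours against the distribution of that quantity over the whole cloud. The brute-force sketch below follows that idea on a tiny 2D point set. The function name, parameters, and data are hypothetical, and a real implementation would use a spatial index instead of comparing all pairs.

```python
import math


def remove_statistical_outliers(points, k=2, std_ratio=1.0):
    """Drop points whose mean k-nearest-neighbour distance is unusually large."""
    mean_dists = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_dists.append(sum(dists[:k]) / k)
    mu = sum(mean_dists) / len(mean_dists)
    sigma = math.sqrt(sum((d - mu) ** 2 for d in mean_dists) / len(mean_dists))
    threshold = mu + std_ratio * sigma
    return [p for p, d in zip(points, mean_dists) if d <= threshold]


# Nine returns along a wall plus one spurious return far from the surface.
wall = [(0.1 * i, 0.0) for i in range(9)]
spurious = (5.0, 5.0)
cloud = wall + [spurious]

filtered = remove_statistical_outliers(cloud)
print(f"{len(cloud)} points in, {len(filtered)} points out")
```

The `std_ratio` parameter trades off aggressiveness against the risk of deleting sparse but valid returns, for example at long range where point density naturally drops.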

5.3 Camera Errors

- Lighting changes, motion blur, or rolling shutter effects introduce visual noise.

- Correction methods:

  - Photometric calibration

  - Robust feature selection and tracking

  - Bundle adjustment to refine pose estimates

5.4 Time Synchronization

- Multi-sensor systems require precise timestamp alignment.

- Errors cause misaligned measurements, degrading fusion accuracy.

- Techniques: hardware-triggered acquisition or software interpolation.
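
Software interpolation can be as simple as linearly interpolating the higher-rate stream at the timestamp of the lower-rate one. The sketch below resamples a gyroscope signal at a camera frame time. The timestamps and values are hypothetical, and both streams are assumed to share a common clock already.

```python
from bisect import bisect_left


def interpolate_sample(timestamps, values, query_time):
    """Linearly interpolate a sorted, timestamped signal at query_time."""
    if not timestamps[0] <= query_time <= timestamps[-1]:
        raise ValueError("query_time is outside the recorded interval")
    idx = bisect_left(timestamps, query_time)
    if timestamps[idx] == query_time:
        return values[idx]
    t0, t1 = timestamps[idx - 1], timestamps[idx]
    alpha = (query_time - t0) / (t1 - t0)
    return values[idx - 1] + alpha * (values[idx] - values[idx - 1])


# Hypothetical 200 Hz gyro samples (seconds, rad/s).
imu_times = [0.000, 0.005, 0.010, 0.015]
gyro_z = [0.10, 0.12, 0.16, 0.18]

camera_time = 0.0075  # a frame captured between two IMU samples
gyro_at_frame = interpolate_sample(imu_times, gyro_z, camera_time)
print(f"gyro rate at camera frame: {gyro_at_frame:.3f} rad/s")
```

Linear interpolation is adequate for signals sampled much faster than they change. Orientations need spherical interpolation instead, and no interpolation can fix an unknown constant offset between sensor clocks.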

6. Case Study: IMU + LiDAR + Camera Fusion in SLAM

6.1 System Overview

- IMU provides high-frequency motion prediction

- LiDAR constructs 3D maps and detects obstacles

- Camera tracks visual features for pose refinement

6.2 Fusion Process

- Preprocessing: Denoise sensor data, extract LiDAR points, and detect camera features

- Prediction Step: Use IMU data to predict motion and pose

- Update Step:

  - Match LiDAR scans to previous map

  - Align visual features with predicted pose

- Optimization: Minimize residual errors using EKF, UKF, or factor graphs

- Output: Refined pose estimate and 3D environmental map

6.3 Performance Evaluation

- Multi-sensor fusion can reduce pose estimation error by over 50% compared to single-sensor methods, although the exact gain depends on the environment, sensor quality, and calibration

- Enables robust navigation in GPS-denied or visually challenging environments

7. Advanced Fusion Techniques

7.1 Tight Coupling vs. Loose Coupling

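- Tight coupling: Raw or near-raw measurements from all sensors are fused in a single estimator, providing higher accuracy at the cost of greater computational demand and complexity

- Loose coupling: Each sensor pipeline produces its own estimate (for example, a pose), and these estimates are combined at the state or decision level; this is more modular and makes a failing pipeline easier to isolate, but is typically somewhat less accurate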

7.2 Deep Learning in Sensor Fusion

- Neural networks learn nonlinear mappings from heterogeneous sensor inputs

- Applications:

  - Depth completion

  - Semantic SLAM

  - Noise compensation

7.3 Multi-Modal Calibration

- Automatic online calibration adjusts for sensor drift, mounting shifts, or temperature-induced distortion

- Methods include mutual information maximization and iterative optimization

8. Applications in Robotics

8.1 Autonomous Vehicles

- IMU + LiDAR + camera fusion enables:

  - High-precision localization

  - Real-time obstacle detection

  - Complex decision-making in dynamic environments

8.2 Mobile Robotics

- Service robots rely on fused perception for navigation in cluttered indoor spaces

- Fusion ensures stability during high-speed movement or uneven terrain traversal

8.3 UAVs and Drones

- Multi-sensor fusion improves pose estimation during aggressive flight, compensating for IMU drift and GPS signal loss

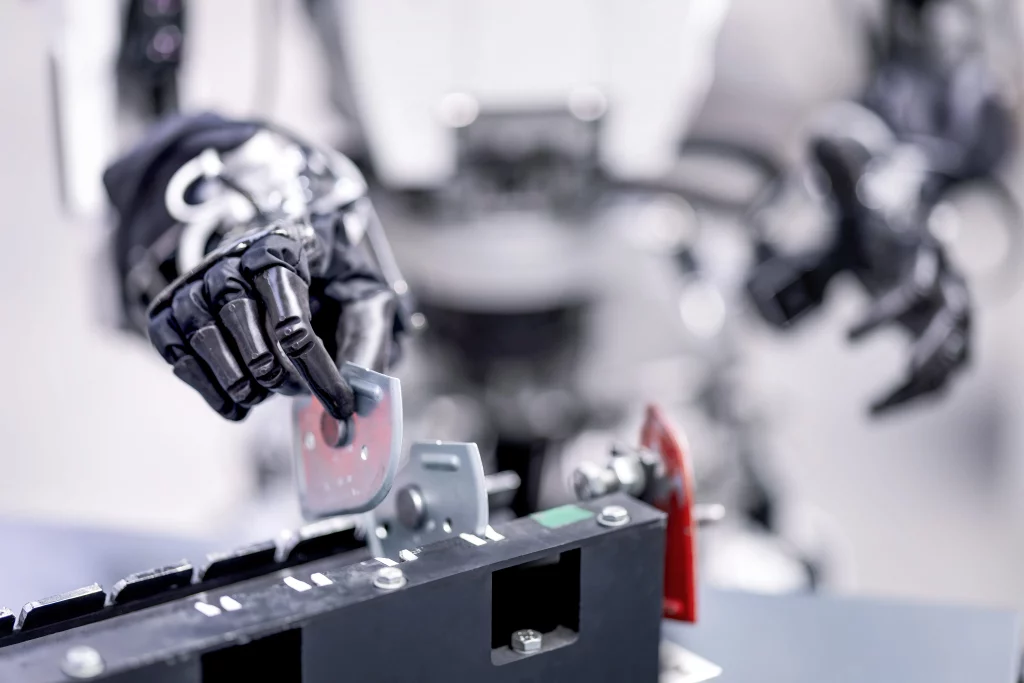

8.4 Industrial Automation

- Robotic manipulators use fused sensors for precise object detection, grasping, and coordination with humans and machines

9. Challenges and Future Directions

9.1 Computational Efficiency

- Real-time fusion of high-frequency IMU data, dense LiDAR scans, and high-resolution images requires optimized algorithms and hardware acceleration

9.2 Robustness in Complex Environments

- Sensors may fail or provide inconsistent data; systems must handle sensor dropout, occlusion, or extreme environmental conditions

9.3 Scalability

- Expanding to multiple robots sharing sensor data introduces network synchronization and large-scale fusion challenges

9.4 AI-Augmented Fusion

- Deep learning models can enhance robustness and accuracy, particularly for feature extraction, error correction, and sensor prediction

10. Conclusion

Multi-sensor fusion of IMU, LiDAR, and camera data is a cornerstone of modern robotics, enabling:

- Accurate and reliable pose estimation

- Robust mapping and navigation in complex environments

- Adaptive error correction for sensor drift, noise, and misalignment

Key principles include:

- Leveraging complementary strengths of sensors

- Applying state estimation algorithms (EKF, UKF, particle filters, factor graphs)

- Correcting biases, noise, and synchronization errors

- Integrating AI and optimization methods for advanced perception

As robotics continues to evolve, IMU + LiDAR + camera fusion will remain central to autonomous navigation, dynamic control, and human-robot collaboration, bridging the gap between theoretical research and practical deployment.