Introduction: The Evolution of Robot Motion Control

Modern robotics increasingly demands precise, adaptive, and intelligent motion control. Whether in humanoid robots performing complex maneuvers, industrial arms executing high-speed assembly, or mobile robots navigating unstructured environments, the integration of dynamics control and motion planning has become central to robotic performance.

Traditional linear controllers often fall short when handling nonlinear robot dynamics, environmental uncertainties, or multi-degree-of-freedom interactions. To address these challenges, three complementary approaches dominate current research and applications:

- Nonlinear Control: Handles inherent nonlinearities in robot kinematics and dynamics.

- Predictive Control: Anticipates future states to optimize motion trajectories.

- Reinforcement Learning (RL): Allows robots to learn complex behaviors through trial-and-error interaction with their environment.

This article surveys these approaches, covering their principles, methods, applications, and relative strengths and weaknesses.

1. Fundamentals of Robot Dynamics

1.1 Kinematics and Dynamics

- Kinematics describes motion without considering forces, focusing on joint positions, velocities, and accelerations.

- Dynamics incorporates forces, torques, and inertial effects; the equations of motion are typically derived with the Newton-Euler or Lagrangian formulation.

- Key equations:

$$M(q)\ddot{q} + C(q,\dot{q})\dot{q} + G(q) = \tau$$

Where:

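- $q$, $\dot{q}$, $\ddot{q}$: Joint positions, velocities, and accelerations

- $M(q)$: Mass/inertia matrix (symmetric and positive definite)

- $C(q,\dot{q})$: Coriolis/centrifugal matrix; the product $C(q,\dot{q})\dot{q}$ is the vector of Coriolis and centrifugal torques

- $G(q)$: Gravitational torque vector

- $\tau$: Joint torque input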
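To make these terms concrete, the sketch below evaluates $M(q)$, $C(q,\dot{q})$, and $G(q)$ for a planar two-link arm and computes the inverse dynamics $\tau$. It assumes point masses at the link tips, unit masses and lengths by default, $q_1$ measured from the horizontal, and $q_2$ measured relative to link 1; these parameters and the function names are illustrative, not taken from a particular robot or library.

```python
import math

def two_link_dynamics(q, dq, m1=1.0, m2=1.0, l1=1.0, l2=1.0, g=9.81):
    """Return M(q), C(q, dq), G(q) for a planar two-link arm (hypothetical parameters).

    Assumes point masses m1, m2 at the tips of links of length l1, l2;
    q[0] is measured from the horizontal, q[1] relative to link 1.
    """
    q1, q2 = q
    dq1, dq2 = dq
    c2, s2 = math.cos(q2), math.sin(q2)
    # Mass/inertia matrix: symmetric and positive definite
    m11 = (m1 + m2) * l1**2 + m2 * l2**2 + 2 * m2 * l1 * l2 * c2
    m12 = m2 * l2**2 + m2 * l1 * l2 * c2
    m22 = m2 * l2**2
    M = [[m11, m12], [m12, m22]]
    # Coriolis/centrifugal matrix (one valid choice; makes dM/dt - 2C skew-symmetric)
    h = m2 * l1 * l2 * s2
    C = [[-h * dq2, -h * (dq1 + dq2)], [h * dq1, 0.0]]
    # Gravitational torques (gravity acts along -y)
    G = [(m1 + m2) * g * l1 * math.cos(q1) + m2 * g * l2 * math.cos(q1 + q2),
         m2 * g * l2 * math.cos(q1 + q2)]
    return M, C, G

def inverse_dynamics(q, dq, ddq, **params):
    """Joint torques tau = M(q) ddq + C(q, dq) dq + G(q)."""
    M, C, G = two_link_dynamics(q, dq, **params)
    return [sum(M[i][j] * ddq[j] + C[i][j] * dq[j] for j in range(2)) + G[i]
            for i in range(2)]

print(inverse_dynamics([0.0, 0.0], [0.0, 0.0], [0.0, 0.0]))
```

Holding the arm horizontal at rest needs only gravity compensation, so the call above returns approximately $G(0) = [29.43, 9.81]$ N·m with the default parameters. The chosen $C$ also satisfies the standard property that $\dot{M} - 2C$ is skew-symmetric, which passivity-based designs rely on.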

1.2 Challenges in Dynamics Control

- Nonlinear coupling: Interactions among joints produce nonlinear effects that complicate control.

- External disturbances: Contact forces, payload changes, and environment variations require robust control strategies.

- High-dimensional systems: Humanoids and manipulators often have many degrees of freedom (DoFs), increasing computational complexity.

2. Nonlinear Control Techniques

Nonlinear control explicitly addresses the nonlinearities in robot dynamics and can offer better stability and tracking performance than controllers designed on a linearized model, especially over large operating ranges.

2.1 Feedback Linearization

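- Cancels the nonlinear terms so that the closed-loop dynamics become linear; for a fully actuated manipulator, each joint reduces to a double integrator $\ddot{q} = v$.

- Control law example:

$$\tau = M(q)v + C(q,\dot{q})\dot{q} + G(q)$$

Where $v$ is a new (virtual) acceleration input, typically chosen as $v = \ddot{q}_d + K_d(\dot{q}_d - \dot{q}) + K_p(q_d - q)$ for a desired trajectory $q_d(t)$. This yields the linear error dynamics $\ddot{e} + K_d\dot{e} + K_p e = 0$ with $e = q_d - q$.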

- Advantages: Reduces trajectory tracking to a linear design problem with intuitive gain tuning.

- Limitations: Requires precise modeling; sensitive to parameter uncertainty.
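A minimal computed-torque (feedback linearization) sketch on a hypothetical single pendulum, where $M(q) = ml^2$, $C = 0$ (a single joint has no Coriolis term), and $G(q) = mgl\sin q$. The outer loop uses $v = \ddot{q}_d + K_d(\dot{q}_d - \dot{q}) + K_p(q_d - q)$. The parameters, gains, and sinusoidal reference are assumptions chosen for illustration, and the controller is given the exact model.

```python
import math

# Hypothetical pendulum: M(q) = m*l^2, C = 0, G(q) = m*g*l*sin(q),
# with q measured from the downward vertical.
m, l, g = 1.0, 0.5, 9.81

def M(q):
    return m * l**2

def G(q):
    return m * g * l * math.sin(q)

def computed_torque(q, dq, qd, dqd, ddqd, kp=100.0, kd=20.0):
    """Feedback linearization: tau = M(q) v + G(q), v = feedforward + PD on the error."""
    v = ddqd + kd * (dqd - dq) + kp * (qd - q)
    return M(q) * v + G(q)

def simulate(T=5.0, dt=1e-3):
    q, dq, t = 0.5, 0.0, 0.0        # start away from the reference
    for _ in range(int(round(T / dt))):
        qd, dqd, ddqd = math.sin(t), math.cos(t), -math.sin(t)  # reference q_d(t)
        tau = computed_torque(q, dq, qd, dqd, ddqd)
        ddq = (tau - G(q)) / M(q)   # plant: M(q) ddq + G(q) = tau
        dq += dt * ddq              # semi-implicit Euler integration
        q += dt * dq
        t += dt
    return q - math.sin(t)          # final tracking error

print(f"final tracking error: {simulate():.2e} rad")
```

With an exact model the error obeys $\ddot{e} + K_d\dot{e} + K_p e = 0$, critically damped for $K_p = 100$, $K_d = 20$, so the remaining error is dominated by the integration step. Using a different mass in the controller than in the simulated plant would expose the sensitivity to parameter error.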

2.2 Sliding Mode Control (SMC)

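- Defines a sliding surface in state space, for example $s = \dot{e} + \lambda e$ with $\lambda > 0$, and uses a switching control term to drive the system onto the surface and keep it there despite bounded disturbances.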

- Robust against model uncertainties and external perturbations.

- Widely applied in robot manipulators and mobile robots.
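The following sketch applies sliding mode control to a hypothetical pendulum whose controller assumes a mass 20% lower than the true one, while the plant is also hit by an unmodeled sinusoidal torque. It uses the surface $s = \dot{e} + \lambda e$ with $e = q - q_d$. Replacing $\mathrm{sign}(s)$ by a saturation inside a thin boundary layer of width `phi` is a common practical choice to limit chattering, at the cost of a small residual error. All numerical values are assumptions.

```python
import math

# Hypothetical pendulum: true mass 1.0 kg, but the controller only knows 0.8 kg.
m_true, m_hat, l, g = 1.0, 0.8, 1.0, 9.81

def smc_torque(q, dq, qd, dqd, ddqd, lam=5.0, k=20.0, phi=0.05):
    """Sliding mode control on s = de + lam * e, with e = q - qd."""
    e, de = q - qd, dq - dqd
    s = de + lam * e
    # Saturation (boundary layer) instead of sign(s) to limit chattering
    sat = max(-1.0, min(1.0, s / phi))
    # Nominal model-based term (estimated mass) plus robust switching term
    return m_hat * l**2 * (ddqd - lam * de - k * sat) + m_hat * g * l * math.sin(q)

def simulate(T=5.0, dt=1e-3):
    q, dq, t = 0.5, 0.0, 0.0
    for _ in range(int(round(T / dt))):
        qd, dqd, ddqd = math.sin(t), math.cos(t), -math.sin(t)
        tau = smc_torque(q, dq, qd, dqd, ddqd)
        d = 0.5 * math.sin(5.0 * t)                 # unmodeled disturbance torque
        # True plant uses the true mass and feels the disturbance
        ddq = (tau + d - m_true * g * l * math.sin(q)) / (m_true * l**2)
        dq += dt * ddq
        q += dt * dq
        t += dt
    return q - math.sin(t)

print(f"final tracking error: {simulate():.2e} rad")
```

The switching gain `k` must dominate the combined effect of model error and disturbance; with these values the tracking error stays small despite the 20% mass mismatch.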

2.3 Adaptive Nonlinear Control

- Adjusts controller parameters online to compensate for unknown dynamics or payload variations.

- Example: Adaptive computed torque control for robotic arms with uncertain mass distribution.
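A sketch of adaptive control in the style of Slotine and Li, again on a hypothetical pendulum: the mass is treated as unknown, while $l$ and $g$ are assumed known. Because the dynamics are linear in the mass, the controller is $\tau = Y\hat{m} - Ks$ with regressor $Y = l^2\ddot{q}_r + gl\sin q$, and the estimate is updated by $\dot{\hat{m}} = -\gamma Y s$, where $s = \dot{e} + \lambda e$ and $\ddot{q}_r = \ddot{q}_d - \lambda\dot{e}$. Gains, the initial estimate, and the reference trajectory are illustrative.

```python
import math

# Hypothetical pendulum: the mass is unknown to the controller; l and g are known.
m_true, l, g = 2.0, 1.0, 9.81

def simulate(T=20.0, dt=1e-3, lam=5.0, K=10.0, gamma=1.0, m_hat=1.0):
    """Adaptive tracking of q_d(t) = sin(t); returns (final error, final mass estimate)."""
    q, dq, t = 0.0, 1.0, 0.0                  # start on the reference
    for _ in range(int(round(T / dt))):
        qd, dqd, ddqd = math.sin(t), math.cos(t), -math.sin(t)
        e, de = q - qd, dq - dqd
        s = de + lam * e                      # composite tracking error
        ddqr = ddqd - lam * de                # "reference" acceleration
        Y = l**2 * ddqr + g * l * math.sin(q)  # regressor: dynamics are linear in m
        tau = Y * m_hat - K * s               # certainty-equivalence term + damping
        m_hat += dt * (-gamma * Y * s)        # gradient-type adaptation law
        # Plant: m l^2 ddq + m g l sin(q) = tau
        ddq = (tau - m_true * g * l * math.sin(q)) / (m_true * l**2)
        dq += dt * ddq
        q += dt * dq
        t += dt
    return q - math.sin(t), m_hat

err, m_est = simulate()
print(f"final error {err:.1e} rad, mass estimate {m_est:.3f} kg")
```

With $V = \tfrac{1}{2}ml^2 s^2 + \tfrac{1}{2\gamma}\tilde{m}^2$ one gets $\dot{V} = -Ks^2 \le 0$, so tracking converges. The mass estimate itself converges to the true value here only because the sinusoidal reference keeps the regressor persistently exciting.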

2.4 Passivity-Based Control

- Exploits the passivity (energy-dissipation) properties of robot dynamics, shaping and dissipating energy to guarantee closed-loop stability.

- Common in underactuated robots, exoskeletons, and legged locomotion.
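The energy argument is easiest to see on PD control with gravity compensation, a classic energy-based regulator. For a hypothetical pendulum, $\tau = K_p(q^* - q) - K_d\dot{q} + G(q)$ makes the kinetic energy plus the energy of a virtual spring, $V = \tfrac{1}{2}ml^2\dot{q}^2 + \tfrac{1}{2}K_p(q^* - q)^2$, satisfy $\dot{V} = -K_d\dot{q}^2 \le 0$. The sketch simulates this and records $V$; parameter values and gains are assumptions.

```python
import math

# Hypothetical pendulum: M = m*l^2, G(q) = m*g*l*sin(q), q measured from hanging down.
m, l, g = 1.0, 1.0, 9.81
KP, KD = 20.0, 5.0

def gravity(q):
    return m * g * l * math.sin(q)

def pd_gravity(q, dq, q_goal):
    """PD control plus gravity compensation."""
    return KP * (q_goal - q) - KD * dq + gravity(q)

def storage(q, dq, q_goal):
    """Kinetic energy plus the energy stored in the virtual PD 'spring'."""
    return 0.5 * m * l**2 * dq**2 + 0.5 * KP * (q_goal - q) ** 2

def simulate(q_goal=1.0, T=5.0, dt=1e-3):
    q, dq = 0.0, 0.0
    V = [storage(q, dq, q_goal)]
    for _ in range(int(round(T / dt))):
        tau = pd_gravity(q, dq, q_goal)
        ddq = (tau - gravity(q)) / (m * l**2)   # plant: m l^2 ddq + G(q) = tau
        dq += dt * ddq
        q += dt * dq
        V.append(storage(q, dq, q_goal))
    return q, V

q_final, V = simulate()
print(q_final, V[0], V[-1])
```

The recorded storage function decays toward zero as the joint settles at `q_goal`; the same energy-shaping idea underlies passivity-based controllers for more complex robots.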

3. Predictive Control in Robotics

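Predictive control uses a model of the robot to optimize future trajectories over a finite horizon; only the first control input is applied, and the optimization is repeated at the next time step (receding horizon).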

3.1 Model Predictive Control (MPC)

- Formulates motion planning as a constrained optimization problem:

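$$\min_{u_0,\ldots,u_N} \sum_{k=0}^{N} \left( \|x_k - x_{\mathrm{ref},k}\|_Q^2 + \|u_k\|_R^2 \right) \quad \text{subject to} \quad x_{k+1} = f(x_k, u_k)$$

Where $\|z\|_Q^2 = z^\top Q z$, and the weighting matrices $Q$ and $R$ trade off tracking accuracy against control effort.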

- Constraints: joint limits, collision avoidance, torque limits.

- Advantages:

  - Handles multi-variable systems

  - Incorporates constraints explicitly

  - Optimizes performance over a future horizon
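The sketch below solves a small box-constrained version of this MPC problem for a hypothetical single joint modeled as a double integrator ($\ddot{q} = u$), with $x_{\mathrm{ref},k} = (q_{\mathrm{ref}}, 0)$ and an input limit standing in for a torque limit. To stay dependency-free it uses projected gradient descent, with an adjoint (backward) pass for the gradient, instead of a dedicated QP solver. The weights, horizon, limit, and step size are assumptions; the step is chosen below $2/L$, with $L$ bounded by the trace of the Hessian for these values. Because $u_N$ does not affect any state, it is dropped from the decision variables.

```python
# Hypothetical single joint modeled as a double integrator: q'' = u, state x = (q, dq).
DT, N = 0.1, 20               # sampling time [s] and horizon length (assumed)
QP, QV, R = 10.0, 1.0, 0.1    # weights on position error, velocity, and input (assumed)
U_MAX = 1.0                   # input limit standing in for a torque limit (assumed)
STEP = 0.04                   # gradient step; below 2/L for these weights

def rollout(x0, u):
    """Simulate x_{k+1} = A x_k + B u_k (exact double-integrator discretization)."""
    xs = [x0]
    for uk in u:
        q, dq = xs[-1]
        xs.append((q + DT * dq + 0.5 * DT**2 * uk, dq + DT * uk))
    return xs

def solve_mpc(x0, q_ref, u, iters=200):
    """Projected gradient descent on the box-constrained QP, warm-started at u."""
    u = list(u)
    for _ in range(iters):
        xs = rollout(x0, u)
        lq, lv = 0.0, 0.0                    # adjoint (costate), starts at lambda_{N+1} = 0
        grad = [0.0] * N
        for k in range(N, 0, -1):
            q, dq = xs[k]
            # lambda_k = grad of stage cost at x_k + A^T lambda_{k+1}
            lq, lv = 2 * QP * (q - q_ref) + lq, 2 * QV * dq + DT * lq + lv
            # dJ/du_{k-1} = 2 R u_{k-1} + B^T lambda_k
            grad[k - 1] = 2 * R * u[k - 1] + 0.5 * DT**2 * lq + DT * lv
        # Gradient step, then projection onto the box |u| <= U_MAX
        u = [max(-U_MAX, min(U_MAX, uk - STEP * gk)) for uk, gk in zip(u, grad)]
    return u

def run(q_ref=1.0, steps=60):
    """Receding-horizon loop: solve, apply the first input, shift, repeat."""
    x, u, applied = (0.0, 0.0), [0.0] * N, []
    for _ in range(steps):
        u = solve_mpc(x, q_ref, u)
        applied.append(u[0])
        x = rollout(x, [u[0]])[1]
        u = u[1:] + [u[-1]]                  # warm start for the next solve
    return x, applied

x_final, inputs = run()
print(x_final, max(abs(a) for a in inputs))
```

`run()` applies only the first input of each plan and shifts the remaining plan to warm-start the next solve, the receding-horizon pattern typical of MPC. Every applied input respects $|u| \le 1$ because each iterate is projected onto the constraint set; production systems would use a dedicated QP solver instead.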

3.2 Applications in Robotics

- Humanoid locomotion: MPC generates stable walking patterns while avoiding falls.

- Manipulators: Optimizes joint trajectories for collision-free, energy-efficient paths.

- Mobile robots: Combines path planning and obstacle avoidance in dynamic environments.

3.3 Real-Time Considerations

- Requires efficient solvers for high-dimensional systems.

- Recent advances: fast quadratic programming (QP) solvers, sparsity-exploiting formulations, and GPU acceleration.

4. Reinforcement Learning in Motion Control

Reinforcement Learning (RL) enables robots to learn control policies through interaction with the environment.

4.1 Basic Concepts

- The robot (agent) observes state $s$, takes action $a$, receives reward $r$, and updates its policy $\pi(a \mid s)$.

- Goal: maximize the expected discounted cumulative reward:

$$J(\pi) = \mathbb{E}\left[\sum_{t=0}^{\infty} \gamma^t r_t\right]$$

Where $\gamma \in [0, 1)$ is the discount factor.
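For a finite episode, the sum inside $J(\pi)$ can be computed with the backward recursion $G_t = r_t + \gamma G_{t+1}$; averaging such returns over many episodes gives a Monte Carlo estimate of $J(\pi)$. A minimal sketch (the function name is illustrative):

```python
def discounted_return(rewards, gamma):
    """Return sum_t gamma**t * r_t for one finite episode."""
    G = 0.0
    for r in reversed(rewards):   # backward recursion G_t = r_t + gamma * G_{t+1}
        G = r + gamma * G
    return G

print(discounted_return([1.0, 1.0, 1.0], gamma=0.9))
```

With three unit rewards and $\gamma = 0.9$ the result is $1 + 0.9 + 0.81 = 2.71$, up to floating-point rounding.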

4.2 Algorithms

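- Policy Gradient Methods: Directly optimize the parameters of the policy by gradient ascent on $J(\pi)$ (e.g., TRPO, PPO).

- Value-Based Methods: Learn action-value functions and act greedily with respect to them (e.g., DQN); best suited to discrete action spaces.

- Actor-Critic Methods: Combine a learned policy (actor) with a learned value function (critic) that reduces the variance of policy updates (e.g., DDPG, SAC).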
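As a small illustration of a value-based method, the sketch runs tabular Q-learning (the update rule that DQN approximates with a neural network) on a hypothetical toy task: a joint discretized into six positions that must reach the last one. The environment, reward, and hyperparameters are assumptions; real robot tasks usually need function approximation and continuous actions.

```python
import random

N_STATES, GOAL = 6, 5            # hypothetical discretized joint positions 0..5
ACTIONS = (-1, +1)               # move one cell left or right

def step(s, a):
    """Deterministic toy environment: reward 1 on reaching the goal cell."""
    s_next = min(max(s + ACTIONS[a], 0), N_STATES - 1)
    return s_next, (1.0 if s_next == GOAL else 0.0), s_next == GOAL

def q_learning(episodes=500, alpha=0.5, gamma=0.9, eps=0.2, seed=0):
    rng = random.Random(seed)
    Q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        s = 0
        for _ in range(50):                          # cap the episode length
            if rng.random() < eps or Q[s][0] == Q[s][1]:
                a = rng.randrange(2)                 # explore, or break ties randomly
            else:
                a = 0 if Q[s][0] > Q[s][1] else 1    # exploit the current estimate
            s_next, r, done = step(s, a)
            target = r if done else r + gamma * max(Q[s_next])
            Q[s][a] += alpha * (target - Q[s][a])    # temporal-difference update
            s = s_next
            if done:
                break
    return Q

Q = q_learning()
greedy = [0 if q[0] > q[1] else 1 for q in Q[:GOAL]]  # 1 means "move right"
print(greedy)
```

After training, the greedy action in every non-goal state is 1 (move right), which is the optimal policy for this task.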

4.3 Applications

- Legged robots: RL has produced dynamic locomotion and jumping behaviors that are difficult to design with analytic models.

- Robotic manipulation: Grasping, object manipulation, and in-hand reorientation.

- Adaptive control: Robots learn to compensate for unknown dynamics and disturbances.

4.4 Challenges

- High-dimensional state-action spaces

- Sample inefficiency

- Safety in real-world deployment (often mitigated by training in simulation and transferring the policy to hardware, known as sim-to-real transfer)

5. Integration of Nonlinear, Predictive, and RL Approaches

Modern robotics increasingly combines model-based and learning-based approaches:

- Nonlinear control provides stability guarantees.

- MPC ensures constraint satisfaction and trajectory optimization.

- RL enables adaptive behavior and exploration in uncertain environments.

5.1 Hybrid Architectures

- Example:

  - RL generates reference trajectories for high-level tasks

  - MPC refines trajectories considering constraints

  - Nonlinear controllers handle torque-level actuation

- Benefits: robust, adaptive, and high-performance motion control
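This three-layer structure can be sketched as a multi-rate loop on a hypothetical pendulum. Two layers are deliberately simplified stand-ins: `high_level_policy` is a hand-written placeholder for a trained RL policy, and `reference_generator` is a rate-limited reference rather than an actual MPC solver. Only the bottom layer is a real (computed-torque) controller. The 10 Hz / 100 Hz / 1 kHz rates are illustrative assumptions.

```python
import math

# Hypothetical pendulum plant: M = m*l^2, G(q) = m*g*l*sin(q)
m, l, g = 1.0, 0.5, 9.81

def high_level_policy(t):
    """Placeholder for a trained RL policy (hypothetical): picks a goal joint angle."""
    return 0.8 if t < 2.0 else -0.4

def reference_generator(q_ref, q_goal, v_max, dt):
    """Placeholder for the MPC layer (NOT an MPC): rate-limits the reference toward the goal."""
    step = max(-v_max * dt, min(v_max * dt, q_goal - q_ref))
    return q_ref + step, step / dt

def torque_controller(q, dq, q_ref, dq_ref, kp=100.0, kd=20.0):
    """Low-level computed-torque (feedback linearization) controller."""
    v = kp * (q_ref - q) + kd * (dq_ref - dq)
    return m * l**2 * v + m * g * l * math.sin(q)

def run(T=4.0, dt=1e-3):
    q, dq = 0.0, 0.0
    q_goal, q_ref, dq_ref = 0.0, 0.0, 0.0
    for k in range(int(round(T / dt))):
        if k % 100 == 0:                 # high level at 10 Hz
            q_goal = high_level_policy(k * dt)
        if k % 10 == 0:                  # middle level at 100 Hz
            q_ref, dq_ref = reference_generator(q_ref, q_goal, 1.0, 10 * dt)
        tau = torque_controller(q, dq, q_ref, dq_ref)   # low level at 1 kHz
        ddq = (tau - m * g * l * math.sin(q)) / (m * l**2)
        dq += dt * ddq
        q += dt * dq
    return q, q_goal

q_final, goal = run()
print(q_final, goal)
```

In a real system each stand-in would be replaced by the corresponding component (for example, the middle layer would enforce joint and torque constraints), but the interfaces could stay the same: goals flow down at a low rate, references at a medium rate, and torques at a high rate.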

6. Practical Considerations

6.1 Hardware Requirements

- Real-time computation for MPC and RL inference

- High-bandwidth sensors and low-latency actuators

- Sufficient onboard memory and GPU/FPGA acceleration

6.2 Safety and Reliability

- Controllers must handle unexpected disturbances and model inaccuracies

- Fail-safe strategies: emergency stop, fallback to conservative policies, or energy-based safety envelopes

6.3 Evaluation Metrics

- Tracking error: deviation from desired trajectory

- Energy efficiency: torque and power consumption

- Robustness: performance under external perturbations

- Adaptability: ability to handle payload or environment changes
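The first two metrics can be computed from logged data as below. RMS tracking error is a standard choice; for energy, the sketch shows two common proxies, absolute mechanical work $\sum|\tau\dot{q}|\,\Delta t$ and the squared-torque integral $\sum\tau^2\,\Delta t$. The function names and the uniform sampling interval `dt` are assumptions.

```python
import math

def tracking_rms(q, q_des):
    """Root-mean-square deviation between measured and desired joint positions."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(q, q_des)) / len(q))

def mechanical_work(tau, dq, dt):
    """Absolute mechanical work, sum |tau * dq| * dt, assuming uniform sampling."""
    return sum(abs(t * v) for t, v in zip(tau, dq)) * dt

def torque_effort(tau, dt):
    """Integral of squared torque, an effort proxy related to resistive motor losses."""
    return sum(t * t for t in tau) * dt

print(tracking_rms([0.0, 1.0, 2.0], [0.0, 1.0, 4.0]))
```

For instance, the call above returns $\sqrt{4/3} \approx 1.155$, since only the last sample deviates (by 2).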

7. Case Studies

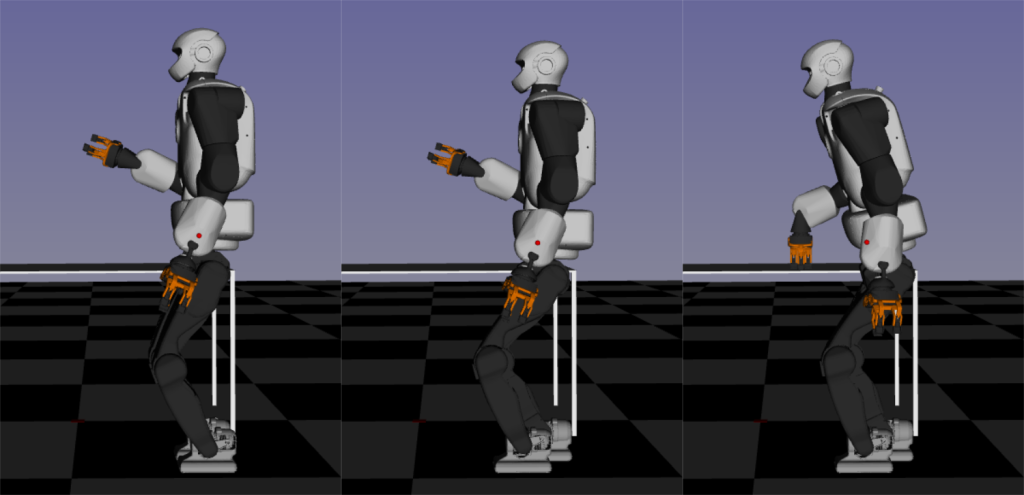

7.1 Atlas Humanoid (Boston Dynamics)

- Combines MPC for balance and nonlinear control for joint torque tracking

- Performs dynamic actions like jumping, running, and obstacle navigation

- Reinforcement learning enables adaptive strategies for disturbances and uneven terrain

7.2 Robotic Manipulators in Industry

- MPC enforces collision avoidance and torque limits

- Nonlinear controllers handle high-speed, high-precision joint trajectories

- RL increasingly explored for adaptive grasping and assembly tasks

7.3 Mobile Robots in Dynamic Environments

- MPC-based path planning adapts to moving obstacles

- RL provides robust navigation strategies under sensor uncertainty

8. Future Directions

8.1 Safe Reinforcement Learning

- Incorporates safety constraints into the learning process to prevent damage during exploration
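One common way to enforce safety constraints during exploration is a safety filter (sometimes called a shield) that minimally modifies the policy's action before it reaches the robot. The sketch assumes, hypothetically, that the RL action is a joint acceleration and bounds it so the joint can still brake to a stop before its position limits, using $v^2 \le 2a_{\max}d$ for the remaining distance $d$. The function name and parameters are illustrative.

```python
import math

def safe_acceleration(q, dq, a_rl, q_min, q_max, a_max, dt):
    """Filter a (hypothetical) RL joint-acceleration command so the joint can still stop.

    Allowed velocities follow the braking-distance bound v^2 <= 2 * a_max * d, where d
    is the distance to the limit in the direction of motion. This is a one-step
    approximation that ignores discretization error, not a formal guarantee.
    """
    v_hi = math.sqrt(2 * a_max * max(q_max - q, 0.0))
    v_lo = -math.sqrt(2 * a_max * max(q - q_min, 0.0))
    # Accelerations that keep the next velocity inside [v_lo, v_hi] and respect a_max
    a_hi = min(a_max, (v_hi - dq) / dt)
    a_lo = max(-a_max, (v_lo - dq) / dt)
    if a_lo > a_hi:                       # already too fast: brake as hard as allowed
        return -a_max if dq > 0 else a_max
    return min(max(a_rl, a_lo), a_hi)     # closest admissible action to the RL proposal

print(safe_acceleration(0.0, 0.0, 2.0, -1.0, 1.0, 5.0, 0.01))    # far from the limits
print(safe_acceleration(0.95, 1.0, 3.0, -1.0, 1.0, 5.0, 0.01))   # near q_max, moving fast
```

Inside the safe region the RL action passes through unchanged (the first call returns 2.0); near a limit at high speed the filter overrides it with maximum braking (the second call returns -5.0).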

8.2 Real-Time Optimization Advances

- GPU and FPGA acceleration enable high-frequency MPC and online learning for high-DoF robots

8.3 Learning-Enhanced Predictive Control

- Combining data-driven model identification with MPC improves trajectory prediction in uncertain environments
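A minimal data-driven identification step: for a hypothetical pendulum, $\tau = \theta_1\ddot{q} + \theta_2\sin q$ is linear in $\theta_1 = ml^2$ and $\theta_2 = mgl$, so ordinary least squares recovers them from logged $(q, \ddot{q}, \tau)$ samples. The identified model could then serve as the prediction model inside MPC. The model structure and the synthetic data are assumptions.

```python
import math

def identify_pendulum(samples):
    """Least-squares fit of tau = theta1 * ddq + theta2 * sin(q).

    samples: iterable of (q, ddq, tau) measurements.
    Returns (theta1, theta2), i.e. estimates of m*l^2 and m*g*l.
    """
    # Accumulate the 2x2 normal equations (Phi^T Phi) theta = Phi^T tau
    a11 = a12 = a22 = b1 = b2 = 0.0
    for q, ddq, tau in samples:
        p1, p2 = ddq, math.sin(q)        # regressor row [ddq, sin(q)]
        a11 += p1 * p1
        a12 += p1 * p2
        a22 += p2 * p2
        b1 += p1 * tau
        b2 += p2 * tau
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("data not informative enough: regressor is rank-deficient")
    # Solve the 2x2 system by Cramer's rule
    return (a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det

# Synthetic, noiseless data from m = 1.0, l = 0.5, g = 9.81 (hypothetical values)
theta_true = (0.25, 4.905)
data = []
for k in range(50):
    q, ddq = 0.1 * k, math.cos(0.3 * k)
    data.append((q, ddq, theta_true[0] * ddq + theta_true[1] * math.sin(q)))

print(identify_pendulum(data))
```

With noiseless synthetic data the estimate matches the true values to numerical precision; with real sensor data, noise and unmodeled effects such as friction would bias it.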

8.4 Multi-Agent Motion Planning

- Coordination among multiple robots using hybrid nonlinear-predictive-RL control for swarm and collaborative applications

9. Strategic Implications

- Industrial automation: Combining nonlinear control, MPC, and RL allows flexible adaptation to product variations

- Service robotics: Enables humanoids to safely navigate complex human environments

- Research and innovation: Hybrid control strategies accelerate development of legged locomotion, dexterous manipulation, and autonomous navigation

10. Conclusion

Dynamics control and motion planning are central to modern robotics, where precision, adaptability, and intelligence define system performance. Key insights include:

- Nonlinear control ensures stability and robustness against dynamic complexities.

- Predictive control optimizes trajectories while respecting constraints.

- Reinforcement learning enables adaptive, high-level decision-making in uncertain environments.

- Hybrid approaches that integrate model-based and learning-based methods combine the guarantees of the former with the adaptability of the latter.

By combining these methods, robotic systems can achieve robust, flexible, and intelligent motion, enabling humanoids, manipulators, and mobile robots to perform complex real-world tasks safely and efficiently.

The future of robotics lies in adaptive, predictive, and learning-driven control architectures, bridging the gap between mechanical capability and cognitive intelligence.