Introduction

In robotics, navigation and control depend critically on a robot’s ability to perceive its environment accurately and reliably. This capability is rooted in sensor performance: how well sensors detect obstacles, understand spatial relationships, and provide feedback for real‑time decision‑making. Among the most important sensors for advanced robotic navigation and control are LiDAR (Light Detection and Ranging), depth cameras, and tactile sensors—each contributing unique strengths and covering gaps left by others. Together, they enable robots to localize, map, avoid obstacles, and interact safely with their surroundings across diverse domains from autonomous vehicles and mobile robots to humanoids and robotic manipulators.

This article examines these sensing technologies: their characteristics, how they contribute to robot perception and control, how they are integrated through sensor fusion, and the design and performance considerations that matter when selecting them.

1. The Role of Sensors in Robotic Navigation and Control

1.1 Perception as the Foundation of Autonomy

Robotic autonomy hinges on the ability to sense, interpret, and respond to the world. Sensors act as the robot’s “eyes,” “skin,” and sometimes even its “spatial intuition.” A robot’s perceptual system supports:

- Localization: Determining where the robot is relative to the world

- Mapping: Building representations of the environment (e.g., 3D maps)

- Obstacle avoidance: Detecting static and dynamic obstacles

- Path planning: Computing safe routes based on current and predicted states

- Task execution: Using tactile feedback to fine‑tune manipulative actions

Without reliable sensing, navigation becomes blind guesswork and control loops destabilize, especially in unstructured or dynamic environments where uncertainty and change are the norm.

1.2 Sensor Fusion: Toward Robust Perception

Single sensors have limitations; for example, cameras can struggle in low light, and LiDAR can miss transparent obstacles. Sensor fusion combines data from multiple modalities into one coherent, partly redundant model of the environment, so that when one sensor type degrades the others still constrain the estimate. This lets hybrid systems maintain navigation and control precision under adverse conditions that would defeat any single sensor.

2. LiDAR: Accurate Active Sensing for Spatial Awareness

2.1 What Is LiDAR?

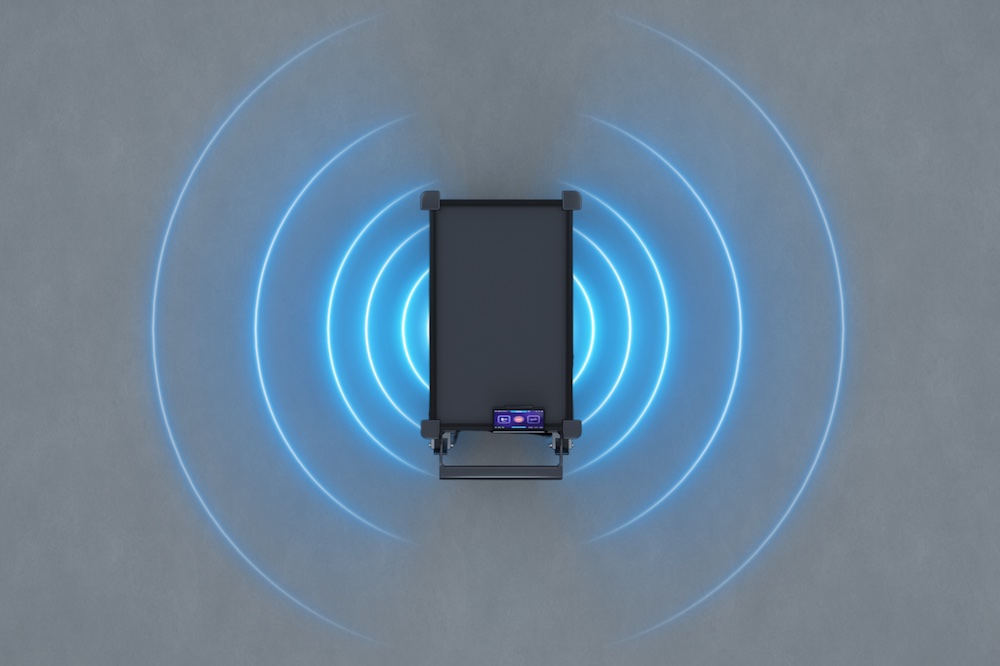

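LiDAR measures distance with laser light. Pulsed time‑of‑flight (ToF) units emit short laser pulses and time their return after reflection from a surface. Frequency‑modulated continuous‑wave (FMCW) units instead emit a continuous, frequency‑swept beam and recover range from the frequency difference between outgoing and returning light, which also yields radial velocity through the Doppler shift. By sweeping or steering the beam, the sensor builds a three‑dimensional point cloud that describes the shape and distance of nearby objects and, for FMCW units, their radial motion. This gives the high‑precision spatial awareness that navigation depends on.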
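
The pulsed time-of-flight relationship can be sketched in a few lines. This is a minimal illustration rather than any vendor's processing chain; the function names are hypothetical, and the second helper assumes a planar (2D) scan expressed in the sensor's own frame.

```python
import math
from typing import Tuple

SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact by SI definition


def tof_range_m(round_trip_s: float) -> float:
    """Range from a pulsed ToF measurement: the pulse travels out and back,
    so the one-way distance is c * t / 2."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def polar_to_xy(range_m: float, bearing_rad: float) -> Tuple[float, float]:
    """Convert one planar scan return (range, bearing) to sensor-frame x, y.
    Assumes bearing is measured counter-clockwise from the sensor's x axis."""
    return range_m * math.cos(bearing_rad), range_m * math.sin(bearing_rad)
```

A round trip of 100 ns corresponds to a target roughly 15 m away, which shows why ToF receivers need timing resolution well below a nanosecond to reach centimeter-level range accuracy.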

2.2 Why LiDAR Is Valuable for Navigation and Control

LiDAR delivers:

- High‑precision range measurements across wide fields of view

- Geometric information with accurate distance and shape data

- Reliable obstacle detection in diverse lighting conditions

- Large dynamic range when using advanced solid‑state designs

For example, LiDAR is widely used in autonomous ground vehicles and mobile robots to build real‑time maps and avoid collisions by continuously scanning the environment around the robot. Its active nature (emitting its own signals) makes it less susceptible to ambient light variations that can affect cameras.

Key performance attributes that affect LiDAR effectiveness include:

- Angular resolution and scanning rate: Influences detail and temporal responsiveness

- Range accuracy: Lower range error yields more precise maps and obstacle distances, and therefore safer navigation

- Environmental robustness: Solid‑state designs are driving down cost and improving durability, making LiDAR more practical for commercial robots in outdoor and indoor use cases.
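
To make the angular resolution attribute concrete, the sketch below estimates how far apart neighbouring beams land at a given range and how many beams fall on a target of a given width. It is a geometric approximation only, assuming a flat target facing the sensor and evenly spaced beams; the function names and example numbers are illustrative, not taken from any datasheet.

```python
import math


def point_spacing_m(range_m: float, angular_resolution_deg: float) -> float:
    """Arc-length spacing between adjacent beams at the given range."""
    return range_m * math.radians(angular_resolution_deg)


def beams_across_target(target_width_m: float, range_m: float,
                        angular_resolution_deg: float) -> int:
    """Whole number of beam intervals that fit inside the angle subtended
    by a target of the given width, centered on the sensor axis."""
    subtended_deg = math.degrees(2.0 * math.atan(target_width_m / (2.0 * range_m)))
    return math.floor(subtended_deg / angular_resolution_deg)
```

With an assumed 0.25° resolution, a 10 cm wide post spans about four beam intervals at 5 m but only about one at 20 m, which is why thin obstacles become hard to detect reliably at long range.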

2.3 Limitations and Complementary Needs

Despite its strengths, LiDAR has challenges:

- Transparent or highly reflective surfaces can confound laser returns

- Cost and size: Historically more expensive and bulkier than camera systems

- Data complexity: Requires significant processing for real‑time usage

These limitations highlight why LiDAR is often paired with other sensors like cameras and tactile systems in robust perception architectures.

3. Depth Cameras: Rich Scene Understanding

3.1 What Are Depth Cameras?

Depth cameras measure distance information per pixel, creating a depth map that associates every image pixel with a real‑world distance. Common types include:

- Structured light systems

- Time‑of‑Flight (ToF) depth cameras

- Stereo vision systems

Many depth cameras (often called RGB‑D cameras) pair a conventional color image with the depth map, so each pixel carries both appearance and distance.
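
A depth map becomes 3D geometry once each pixel is back-projected through the camera model. The sketch below assumes an ideal pinhole camera with no lens distortion, depth values in meters measured along the optical axis, and a value of 0 marking invalid pixels; the intrinsics `fx`, `fy`, `cx`, `cy` and the function names are placeholders for whatever a particular camera's calibration provides.

```python
from typing import List, Sequence, Tuple

Point3D = Tuple[float, float, float]


def backproject(u: float, v: float, depth_m: float,
                fx: float, fy: float, cx: float, cy: float) -> Point3D:
    """Pinhole back-projection of pixel (u, v) with depth Z into the
    camera frame: X = (u - cx) * Z / fx, Y = (v - cy) * Z / fy."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return x, y, depth_m


def depth_map_to_points(depth_map: Sequence[Sequence[float]],
                        fx: float, fy: float, cx: float, cy: float) -> List[Point3D]:
    """Convert a row-major depth map into a list of 3D points,
    skipping pixels whose depth is 0 (assumed to mean 'no measurement')."""
    points = []
    for v, row in enumerate(depth_map):
        for u, z in enumerate(row):
            if z > 0.0:
                points.append(backproject(u, v, z, fx, fy, cx, cy))
    return points
```

The resulting point list is the same kind of data a LiDAR produces, which is what makes the two sensors straightforward to combine in a common map.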

3.2 Advantages of Depth Cameras

Depth cameras excel at:

- Dense mapping of nearby scenes: Useful for indoor navigation and manipulation tasks

- Object recognition and segmentation: RGB information combined with depth supports both semantic and geometric understanding

- Obstacle avoidance: Robots can perceive both shape and position in cluttered spaces

They are particularly valuable in environments where 3D structure around the robot must be understood at close to medium ranges (e.g., robotic arms or service robots in buildings).

3.3 Practical Limitations

Depth sensors have constraints:

- Ambient light sensitivity: Designs that project infrared light (structured light and ToF) lose performance in strong sunlight

- Range limitations: Many depth cameras struggle at long range compared with high‑end LiDAR

- Data noise: Reflective surfaces or motion blur can introduce artifacts

Despite these limitations, depth cameras are central to many simultaneous localization and mapping (SLAM) systems used in robot navigation stacks for both mobility and manipulation tasks.
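
For the stereo systems listed earlier, the range limitation can be made quantitative. Depth follows from disparity as Z = f·B/d, and a fixed disparity error therefore produces a depth error that grows with the square of the distance. The sketch below uses this standard first-order approximation; the focal length, baseline, and matching error in the usage note are assumed values chosen only for illustration.

```python
def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth from stereo disparity: Z = f * B / d."""
    if disparity_px <= 0.0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_px * baseline_m / disparity_px


def stereo_depth_error_m(depth_m: float, focal_px: float, baseline_m: float,
                         disparity_error_px: float) -> float:
    """First-order depth uncertainty: dZ ~= Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px
```

With an assumed 600 px focal length, 5 cm baseline, and 0.25 px matching error, the estimated depth error is under 1 cm at 1 m but about 21 cm at 5 m, a 25-fold increase for a 5-fold increase in range.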

4. Tactile Sensors: Touch as a Navigation and Control Aid

4.1 What Is Tactile Sensing in Robotics?

Tactile sensors provide robots with touch‑based feedback, similar to human skin. Tactile sensing measures contact forces, pressure distribution, and sometimes texture or shear forces. Researchers are increasingly exploring tactile sensors that also offer proximity cues—blending near‑field and contact sensing for continuous interaction feedback.

4.2 Tactile Sensing for Precise Interaction and Control

Tactile sensing contributes to navigation and control in important ways:

- Surface contact confirmation: Knowing when and how a robot touches an object improves manipulation accuracy.

- Slip detection and grip regulation: Essential for robust manipulation while moving.

- Obstacle indication in blind spots: Where LiDAR or cameras cannot detect small bumps or edges because of geometry or occlusion, tactile feedback tells the robot that contact has occurred so it can adjust its motion. This prevents navigation failures caused by blind spots.
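
Slip detection and grip regulation can be illustrated with a simple Coulomb friction model: the contact holds as long as the tangential force stays below the friction coefficient times the normal force. The sketch below is one common simplification, not a description of any specific gripper controller; it assumes the tactile sensor reports normal and tangential force directly, and the safety factor and force limit are hypothetical defaults.

```python
def is_slipping(normal_force_n: float, tangential_force_n: float,
                friction_coeff: float) -> bool:
    """True when the tangential load exceeds the Coulomb friction limit."""
    return abs(tangential_force_n) > friction_coeff * normal_force_n


def grip_force_command_n(tangential_force_n: float, friction_coeff: float,
                         safety_factor: float = 1.5,
                         max_force_n: float = 20.0) -> float:
    """Normal force needed to hold the measured tangential load with a
    safety margin, capped so the gripper does not crush the object."""
    required = safety_factor * abs(tangential_force_n) / friction_coeff
    return min(required, max_force_n)
```

In a control loop, the command would be recomputed every cycle from fresh tactile readings, so the grip tightens when the load increases (for example, when the robot accelerates) and relaxes again afterwards.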

4.3 Advancements in Tactile Sensors

Recent work on multi‑modal tactile sensors integrates visual and tactile modes, enabling robots to sense both near‑field distance and contact geometry with high resolution. These innovations improve the robot’s ability to perform fine‑manipulation tasks and maintain controlled interaction with complex environments.

5. Sensor Integration and Fusion for Navigation and Control

5.1 The Case for Multi‑Sensor Fusion

No single sensor type is sufficient for comprehensive perception in all environments; each has limitations that others can help cover. Sensor fusion strategies leverage:

- LiDAR for robust range and shape perception

- Depth cameras for dense, detailed scene understanding

- Tactile sensors for contact feedback and blind‑spot correction

By combining these through fusion algorithms, robots can generate reliable environmental models and improve decision‑making robustness.
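
One widely used way to merge readings from different sensors into a single environmental model is a log-odds occupancy update, in which each sensor's evidence about a map cell is added in log-odds space. The sketch below is a minimal version of that idea, assuming the readings are conditionally independent and that each sensor's output has already been converted to a probability that the cell is occupied; the function names are hypothetical.

```python
import math
from typing import Iterable


def logit(p: float) -> float:
    """Log-odds of a probability strictly between 0 and 1."""
    return math.log(p / (1.0 - p))


def fuse_cell(prior_p: float, sensor_reports: Iterable[float]) -> float:
    """Fuse per-sensor occupancy probabilities for one map cell.

    Each report is P(occupied | that sensor's reading). Evidence is
    accumulated as log-odds relative to the prior, then mapped back
    to a probability."""
    prior_l = logit(prior_p)
    total_l = prior_l + sum(logit(p) - prior_l for p in sensor_reports)
    return 1.0 / (1.0 + math.exp(-total_l))
```

Two sensors that each report 0.7 for the same cell raise the fused estimate to about 0.84, while conflicting reports of 0.7 and 0.3 cancel and leave the cell at its 0.5 prior.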

5.2 Typical Fusion Techniques

Typical robotic sensor fusion architectures include:

- Kalman filter or particle filter based SLAM for integrating odometry, LiDAR, and IMU

- Deep learning based multi‑modal fusion that learns feature representations across camera and depth data

- Real‑time control loops that incorporate tactile feedback for fine interaction adjustments

These approaches allow robots to:

- Localize accurately while mapping

- Plan paths that avoid obstacles dynamically

- Control grip and motion during manipulation

Effective fusion significantly improves navigation accuracy, control reliability, and safety, supporting autonomous operation in complex real‑world scenarios.
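
The Kalman filter approach can be shown in its simplest form with a one-dimensional example: a robot moving along a corridor predicts its position from odometry and then corrects it with a LiDAR-derived position. This is a deliberately reduced sketch, assuming a scalar state, Gaussian noise, and a LiDAR reading already converted into a position along the corridor; real SLAM systems estimate full poses and maps.

```python
from typing import Tuple


def kf_predict(x: float, p: float, u: float, q: float) -> Tuple[float, float]:
    """Motion step: shift the estimate by the odometry displacement u and
    grow the variance by the process noise q."""
    return x + u, p + q


def kf_update(x: float, p: float, z: float, r: float) -> Tuple[float, float]:
    """Measurement step: blend the prediction with a position measurement z
    of variance r, weighted by the Kalman gain."""
    k = p / (p + r)
    return x + k * (z - x), (1.0 - k) * p


# Example cycle with assumed numbers: start at 0 m with variance 1.0,
# move 1 m by odometry, then observe 1.2 m with the LiDAR.
x, p = kf_predict(0.0, 1.0, u=1.0, q=0.5)
x, p = kf_update(x, p, z=1.2, r=0.5)
```

After the update the variance is lower than both the predicted variance and the measurement variance, which is the formal sense in which fusion outperforms either source alone.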

6. Practical Considerations for Sensor Performance

6.1 Resolution and Range

Performance metrics such as resolution, range, and field of view directly influence navigation:

- High resolution in depth cameras enables precise geometric understanding

- Extended LiDAR range enhances long‑distance obstacle detection

- Wide fields of view reduce blind spots

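Designers must match sensor specifications to their use case; for example, a mobile robot that needs 360° awareness requires either a single wide‑field‑of‑view sensor, such as a rotating LiDAR, or several narrower sensors arranged around its body.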
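
When a single sensor cannot cover the full circle, a first estimate of how many units are needed follows directly from the horizontal field of view. The helper below is a back-of-the-envelope sketch that assumes identical sensors mounted at a common center and an optional overlap between neighbours; it ignores mounting offsets and near-field blind zones, and its name is hypothetical.

```python
import math


def sensors_for_full_coverage(horizontal_fov_deg: float,
                              overlap_deg: float = 0.0) -> int:
    """Minimum number of identical sensors needed to cover 360 degrees,
    reserving overlap_deg of each sensor's view for its neighbour."""
    effective_deg = horizontal_fov_deg - overlap_deg
    if effective_deg <= 0.0:
        raise ValueError("overlap must be smaller than the field of view")
    return math.ceil(360.0 / effective_deg)
```

For example, depth cameras with an assumed 90° horizontal field of view and 10° of overlap would require five units, whereas a 360° scanning LiDAR needs only one.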

6.2 Processing and Latency

High data rates from LiDAR and cameras require real‑time processing. Sensor performance therefore includes not just sensing accuracy but also computational efficiency:

- Efficient onboard processing is crucial for low‑latency control loops

- Edge computing and AI accelerators help meet real‑time constraints

Latency impacts a robot’s ability to make timely navigation and control decisions, especially in dynamic environments.
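
The effect of latency can be estimated with basic kinematics: during the perception and processing delay the robot keeps moving at full speed, and only then begins to brake. The sketch below assumes constant speed during the delay and constant deceleration afterwards; the numbers in the usage note are illustrative, not measurements.

```python
def stopping_distance_m(speed_m_s: float, latency_s: float,
                        decel_m_s2: float) -> float:
    """Distance covered from the moment an obstacle appears until the
    robot is at rest: reaction distance plus braking distance."""
    reaction_m = speed_m_s * latency_s
    braking_m = speed_m_s ** 2 / (2.0 * decel_m_s2)
    return reaction_m + braking_m
```

At an assumed 1.5 m/s with 1.0 m/s² of braking, raising end-to-end latency from 100 ms to 200 ms adds 15 cm to the stopping distance, which must be reflected in the safety margin around obstacles.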

6.3 Environmental Robustness

Environmental robustness—such as resistance to dust, light variation, reflective surfaces, and occlusions—is another key performance dimension. A navigation system must maintain reliability despite changes in:

- Lighting conditions (indoor vs. outdoor)

- Reflective surfaces

- Weather conditions

Sensor suites and fusion strategies must account for these environmental challenges to ensure consistent performance.

7. Case Studies: Sensor‑Driven Navigation Systems

7.1 Autonomous Mobile Robots in Logistics

In warehouse environments, autonomous mobile robots (AMRs) use LiDAR for mapping the facility and for ground‑level obstacle avoidance, combined with depth cameras for close‑proximity sensing around shelves and dynamic objects. Tactile bump sensors serve as a last line of defense near shelving units: if an obstacle escapes the other sensors, contact is detected immediately and the robot can stop before the collision causes damage.

7.2 Humanoid Robots in Dynamic Environments

Humanoid robots that perform complex service tasks integrate multi‑modal sensing:

- LiDAR for spatial awareness and whole‑body navigation

- Depth cameras for object recognition and hand‑object interaction planning

- Tactile sensors in end‑effectors for safe, responsive grips

This multi‑sensor integration is key to performing interactive tasks in human spaces.

Conclusion

For modern robotic systems, whether autonomous vehicles, mobile robots, or humanoid platforms, sensor performance is a cornerstone of effective navigation and control. LiDAR provides accurate range and geometry measurements; depth cameras deliver dense 3D scene information; and tactile sensors close the gap at the point of contact. Each sensor type contributes unique strengths and compensates for the limitations of the others, making multi‑sensor fusion an essential strategy for building robust, adaptable perception systems.