Abstract

Bipedal robots, designed to emulate human locomotion, pose one of the hardest problems in robotics: maintaining dynamic balance and stability. Traditional control methods, based on classical feedback and model-based approaches, struggle with unpredictable environments, disturbances, and real-world contact. Recent advances in Deep Reinforcement Learning (DRL) have enabled bipedal robots to achieve stable walking, dynamic balancing, and adaptive responses to external perturbations. This article analyzes DRL applications in bipedal balance control, covering algorithmic foundations, simulation and real-world implementation, sensor integration, reward engineering, and future directions. It emphasizes how DRL addresses earlier limitations and outlines the market, industrial, and research implications.

1. Introduction

Bipedal robots have fascinated researchers due to their human-like locomotion capabilities and potential applications:

- Service robotics: Human-assistive robots, delivery robots, and reception robots.

- Industrial logistics: Robots navigating complex, uneven terrain in warehouses and factories.

- Healthcare and rehabilitation: Robotic exoskeletons, prosthetics, and training aids.

- Exploration and disaster response: Robots operating in environments designed for humans.

Despite decades of research, achieving robust balance during dynamic movement remains challenging. Disturbances, uneven terrain, and sudden external forces often cause instability, making real-world deployment difficult.

Traditional control methods, including PID controllers, Zero Moment Point (ZMP) approaches, and model predictive control (MPC), rely on hand-tuned gains, precise dynamic models, and restrictive assumptions. While effective in controlled environments, these methods adapt poorly to unstructured, dynamic situations.

Deep Reinforcement Learning takes a different approach: the robot learns a control policy through trial and error, usually in simulation, rather than from an explicit analytical model written by the designer. DRL-based controllers have demonstrated push recovery, slope navigation, and walking over irregular terrain.

2. Bipedal Robot Dynamics and Balance Challenges

2.1 Complex Dynamics

- Bipedal robots are underactuated: the floating base (torso position and orientation) has no direct actuation, so the robot has fewer actuators than degrees of freedom.

- Multi-joint interactions, variable Center of Mass (CoM), and non-linear dynamics create high control complexity.

- Small disturbances can propagate rapidly, causing instability or falls.
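One common way to see why small disturbances grow is the linear inverted pendulum model (LIPM), a standard simplification that this article does not derive. Assuming a point mass held at constant height `z_c` above a fixed pivot, the horizontal CoM offset `x` obeys `x'' = (g / z_c) * x`, so any offset grows roughly exponentially. The sketch below integrates that equation; the CoM height, time step, and initial offset are illustrative assumptions, not values from a specific robot.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def simulate_lipm(x0, v0, com_height=0.9, dt=0.001, duration=1.0):
    """Integrate x'' = (g / z_c) * x with semi-implicit Euler.

    x0, v0: initial CoM offset (m) and velocity (m/s) relative to the pivot.
    Returns the CoM offset after `duration` seconds with no corrective action.
    """
    omega_sq = G / com_height
    x, v = x0, v0
    for _ in range(int(round(duration / dt))):
        v += omega_sq * x * dt  # the offset accelerates the CoM further away
        x += v * dt
    return x


def lipm_closed_form(x0, v0, com_height=0.9, t=1.0):
    """Analytic solution of the same model, used as a cross-check."""
    omega = math.sqrt(G / com_height)
    return x0 * math.cosh(omega * t) + (v0 / omega) * math.sinh(omega * t)
```

With these assumed values, calling `simulate_lipm(0.01, 0.0)` shows a 1 cm offset growing more than tenfold within one second when nothing corrects it, which is why balance controllers have to react quickly.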

2.2 Traditional Control Limitations

- Model-based approaches require accurate dynamic parameters (mass, inertia, joint friction).

- Feedback controllers struggle with unmodeled disturbances or sensor noise.

- Pre-planned trajectories are rigid and adapt poorly to unpredictable terrain or external forces.

2.3 Requirements for Robust Balance Control

- Real-time adaptation to disturbances

- Handling uneven or dynamic terrain

- Energy-efficient locomotion

- Smooth and human-like motion

- Generalization across environments and tasks

3. Fundamentals of Deep Reinforcement Learning (DRL)

3.1 Reinforcement Learning Overview

- Agents learn to maximize cumulative rewards by interacting with the environment.

- Key components:

  - State (s): Observed robot configuration (joint angles, velocities, CoM, IMU readings).

  - Action (a): Joint torque, velocity commands, or target positions.

  - Reward (r): Feedback for desirable behaviors (e.g., maintaining upright posture).

  - Policy (π): Mapping from states to actions.

  - Value function (V): Expected cumulative reward from a given state.
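The "cumulative reward" that the agent maximizes is usually a discounted sum over one episode. The sketch below computes it for every time step; the discount factor `gamma` and the function name are assumptions introduced for illustration, since the article does not specify them.

```python
def discounted_returns(rewards, gamma=0.99):
    """Return G_t = r_t + gamma * G_{t+1} for every step of one episode."""
    returns = [0.0] * len(rewards)
    running = 0.0
    # Walk backwards so each step reuses the return of the step after it.
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns
```

A value function V(s) is an estimate of this quantity for the state visited at step t, averaged over the trajectories the policy would produce.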

3.2 Deep Reinforcement Learning

- Uses deep neural networks to approximate policies or value functions.

- Handles high-dimensional inputs, including sensor data, joint states, and vision.

- Common algorithms for bipedal balance:

  - Proximal Policy Optimization (PPO)

  - Deep Deterministic Policy Gradient (DDPG)

  - Soft Actor-Critic (SAC)

  - Trust Region Policy Optimization (TRPO)
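As an illustration of how one of these algorithms limits the size of each policy update, the sketch below evaluates PPO's clipped surrogate objective for a single sample. The function name and the default `clip_eps=0.2` are assumptions for this example; a real implementation would average this over a batch and differentiate it with an autodiff library.

```python
import math


def ppo_clipped_objective(log_prob_new, log_prob_old, advantage, clip_eps=0.2):
    """Clipped surrogate objective for one (state, action) sample.

    log_prob_new / log_prob_old: log-probability of the action under the
    updated and the data-collecting policy. advantage: estimated advantage.
    """
    ratio = math.exp(log_prob_new - log_prob_old)
    clipped_ratio = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
    # Taking the minimum removes any incentive to move the ratio outside
    # the [1 - eps, 1 + eps] band in the direction that improves the objective.
    return min(ratio * advantage, clipped_ratio * advantage)
```

The clipping keeps a single batch of simulated falls or lucky recoveries from pushing the policy too far in one step, which is one reason PPO is a frequent choice for locomotion training.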

3.3 Advantages for Bipedal Robots

- Does not require precise dynamic models.

- Learns robust control strategies through simulated interactions.

- Can generalize across varying terrains, loads, and disturbances when these are varied during training.

- Integrates multiple sensory modalities for perception-based control.

4. DRL-Based Bipedal Balance Architectures

4.1 Observation Space

- Joint angles and velocities: Provide proprioceptive feedback.

- IMU readings: Measure orientation and angular velocity.

- Foot contact sensors: Detect terrain interactions.

- Optional vision input: For terrain anticipation in dynamic environments.
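The proprioceptive parts of this observation space are typically flattened into one vector before being passed to the policy network. The sketch below shows one way to do that; `SensorFrame`, its field names, and the ordering of the vector are hypothetical choices for illustration, and the optional vision input is left out.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class SensorFrame:
    """One time step of sensor readings (hypothetical layout)."""
    joint_angles: Sequence[float]          # rad
    joint_velocities: Sequence[float]      # rad/s
    imu_orientation: Sequence[float]       # roll, pitch, yaw in rad
    imu_angular_velocity: Sequence[float]  # rad/s
    foot_contacts: Sequence[bool]          # left, right


def build_observation(frame: SensorFrame) -> List[float]:
    """Concatenate all readings into a flat list of floats."""
    obs: List[float] = []
    obs.extend(frame.joint_angles)
    obs.extend(frame.joint_velocities)
    obs.extend(frame.imu_orientation)
    obs.extend(frame.imu_angular_velocity)
    # Contacts are encoded as 1.0 (touching) or 0.0 (in the air).
    obs.extend(1.0 if contact else 0.0 for contact in frame.foot_contacts)
    return obs
```

In practice each component is usually normalized to a similar scale before training, a step omitted here for brevity.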

4.2 Action Space

- Joint torques: Direct control of motors.

- Joint position targets: Suitable for position-controlled actuators.

- Hybrid commands: Combining torque and positional control for flexible adaptation.
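When the policy outputs joint position targets, a low-level proportional-derivative (PD) loop commonly converts them to motor torques. The sketch below is a minimal version of that conversion; the gains and the torque limit are placeholder assumptions, not values tuned for any particular actuator.

```python
def pd_torques(q_target, q, q_dot, kp=40.0, kd=1.0, torque_limit=60.0):
    """Map joint position targets to saturated joint torques.

    q_target, q, q_dot: per-joint target angle (rad), measured angle (rad),
    and measured velocity (rad/s).
    """
    torques = []
    for target, angle, velocity in zip(q_target, q, q_dot):
        tau = kp * (target - angle) - kd * velocity
        # Saturate so the command respects the (assumed) actuator limit.
        torques.append(max(-torque_limit, min(torque_limit, tau)))
    return torques
```

A policy that acts through such a loop only has to choose where each joint should go, while the PD gains determine how stiffly the joint tracks that target.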

4.3 Reward Engineering

- Critical for learning stable and energy-efficient locomotion.

- Typical reward components:

  - Upright posture maintenance

  - Forward velocity tracking

  - Energy efficiency penalties

  - Fall avoidance penalties

  - Smooth joint motion encouragement
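The reward components listed above are usually combined into a single weighted sum per time step. The sketch below is one possible composition; every weight, scale constant, and argument name is an assumption chosen for illustration, and real projects tune these values extensively.

```python
import math


def balance_reward(roll, pitch, forward_vel, target_vel, torques,
                   action, prev_action, fallen):
    """Weighted sum of the five reward components for one time step."""
    # Upright posture: 1.0 when level, decaying as the torso tilts.
    upright = math.exp(-(roll ** 2 + pitch ** 2) / 0.1)
    # Velocity tracking: 1.0 when the commanded speed is matched.
    tracking = math.exp(-((forward_vel - target_vel) ** 2) / 0.25)
    # Energy penalty: sum of squared joint torques.
    energy = sum(t * t for t in torques)
    # Smoothness penalty: change in action between consecutive steps.
    smoothness = sum((a - b) ** 2 for a, b in zip(action, prev_action))
    # Fall penalty: large fixed cost when the episode ends in a fall.
    fall_penalty = 10.0 if fallen else 0.0
    return (1.0 * upright + 1.0 * tracking
            - 1e-4 * energy - 1e-2 * smoothness - fall_penalty)
```

The relative weights decide the trade-off: a larger energy weight yields more conservative motion, while a larger tracking weight favors speed at the cost of effort.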

4.4 Training in Simulation

- Simulators (MuJoCo, PyBullet, Gazebo) accelerate learning by providing:

  - High-speed trials

  - Safe exploration without physical damage

  - Adjustable terrain, disturbances, and robot parameters

- Domain randomization, which varies physical parameters across training episodes, improves the chance that policies transfer from simulation to real robots (sim-to-real), though it does not guarantee it.
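Domain randomization amounts to drawing a fresh set of physical parameters at the start of each training episode. The sketch below shows the sampling step only; the parameter names, nominal values, and ranges are hypothetical, and applying them to a simulator is left out because it depends on the simulator's API.

```python
import random

# Nominal values and multiplicative ranges (all assumed for illustration).
NOMINAL = {"torso_mass": 10.0, "ground_friction": 0.8, "motor_strength": 1.0}
SCALE_RANGES = {
    "torso_mass": (0.8, 1.2),
    "ground_friction": (0.5, 1.25),
    "motor_strength": (0.9, 1.1),
}
MAX_PUSH_FORCE = 100.0  # N, upper bound for a random external push


def sample_episode_params(rng):
    """Draw one randomized parameter set for the next training episode."""
    params = {
        name: NOMINAL[name] * rng.uniform(*SCALE_RANGES[name])
        for name in NOMINAL
    }
    params["push_force"] = rng.uniform(0.0, MAX_PUSH_FORCE)
    return params
```

Calling `sample_episode_params(random.Random(seed))` before each reset exposes the policy to a spread of plausible robots rather than a single idealized one.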

5. Breakthroughs in Bipedal Balance Control

5.1 Dynamic Push Recovery

- DRL-trained robots can withstand external pushes without falling.

- Learned behaviors include:

  - Ankle strategies for small disturbances

  - Hip strategies for moderate pushes

  - Step strategies for large forces
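A learned policy does not choose among these strategies explicitly, but the classical capture-point heuristic gives a feel for when each one is needed. The sketch below computes the instantaneous capture point of a linear inverted pendulum and maps it to a strategy; the foot length, the hip margin, and the three-way threshold rule are simplifying assumptions for illustration, not a description of how a DRL policy decides.

```python
import math


def capture_point(com_pos, com_vel, com_height=0.9, g=9.81):
    """Point on the ground where stepping would bring the pendulum to rest."""
    omega = math.sqrt(g / com_height)
    return com_pos + com_vel / omega


def recovery_strategy(com_pos, com_vel, foot_half_length=0.10, hip_margin=0.05):
    """Classify a disturbance by how far the capture point leaves the foot.

    Positions are measured along the push direction from the foot center (m).
    """
    xi = abs(capture_point(com_pos, com_vel))
    if xi <= foot_half_length:
        return "ankle"  # capture point still under the foot
    if xi <= foot_half_length + hip_margin:
        return "hip"    # slightly outside: upper-body rotation may suffice
    return "step"       # far outside: a recovery step is needed
```

Comparing a trained policy's observed responses against such a heuristic is one way to check whether it has discovered the graded behavior described above.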

5.2 Uneven Terrain Navigation

- DRL enables robots to adapt foot placement in real time.

- Successful policies handle slopes, stairs, and soft or irregular surfaces.

- Vision-based policies anticipate terrain changes and adjust balance proactively.

5.3 Energy-Efficient Walking

- Policies balance stability with energy consumption.

- Reward terms that penalize joint torque encourage low-effort motion while maintaining upright posture.

- The resulting gait can resemble human walking.
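Energy efficiency of a gait is often summarized by the dimensionless cost of transport, the energy used divided by weight times distance traveled. The sketch below computes it from logged joint torques and velocities; it assumes mechanical power only and no energy regeneration (hence the absolute value), and the argument names are hypothetical.

```python
def cost_of_transport(joint_torques, joint_velocities, dt, mass, distance, g=9.81):
    """Mechanical cost of transport: energy / (mass * g * distance).

    joint_torques, joint_velocities: one list of per-joint values per time
    step (N*m and rad/s). dt: step length in seconds. distance: meters walked.
    """
    energy = 0.0
    for taus, omegas in zip(joint_torques, joint_velocities):
        # |torque * angular velocity| is the mechanical power of each joint.
        energy += sum(abs(tau * omega) for tau, omega in zip(taus, omegas)) * dt
    return energy / (mass * g * distance)
```

Lower values mean less energy spent per unit of weight moved per unit distance, so the metric allows gaits from different policies or robots to be compared directly.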

5.4 Multi-Task Generalization

- Single DRL policies can handle multiple tasks:

  - Walking forward/backward

  - Side-stepping

  - Turning

  - Disturbance recovery

- Reduces the need for task-specific controllers.
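One common way to get several behaviors out of a single policy is to append a motion command to the observation, so the same network is asked for different gaits at run time. The sketch below illustrates the idea; the command layout (forward velocity, lateral velocity, yaw rate) and the example values are assumptions, not taken from a specific system.

```python
# Hypothetical commands: (forward m/s, lateral m/s, yaw rate rad/s).
COMMANDS = {
    "walk_forward": (0.5, 0.0, 0.0),
    "walk_backward": (-0.3, 0.0, 0.0),
    "side_step": (0.0, 0.2, 0.0),
    "turn": (0.0, 0.0, 0.5),
}


def command_conditioned_observation(proprioception, command):
    """Append the 3-element motion command to the proprioceptive vector."""
    return list(proprioception) + list(command)
```

Disturbance recovery needs no command of its own in this scheme: the policy is expected to keep tracking the current command while pushes are applied during training.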

6. Real-World Implementations

6.1 Laboratory Prototypes

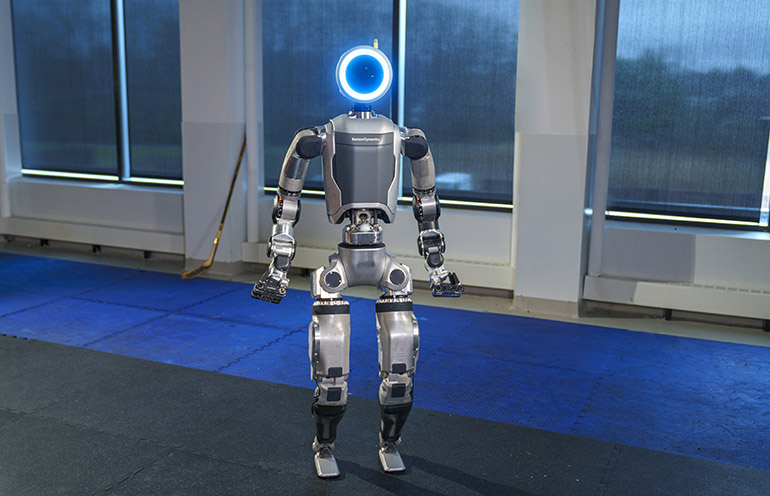

- DRL-based controllers have been reported on bipedal platforms such as Cassie, Digit, and Atlas, where they have been used to:

  - Walk dynamically

  - Navigate uneven terrain

  - Recover from disturbances autonomously

6.2 Industrial and Service Applications

- Humanoid robots in logistics warehouses can use DRL-trained controllers to keep their balance while carrying payloads.

- Service robots need to maintain stability during human interaction and object manipulation.

6.3 Integration with Sensor Networks

- IMUs, force sensors, and LiDAR allow robots to adjust balance in real time.

- Policies that fuse multiple sensors tend to outperform single-sensor strategies, because each sensor compensates for another's noise or blind spots.
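A simple, classical example of sensor fusion for balance is the complementary filter, which blends a gyroscope's fast but drifting estimate with an accelerometer's slow but drift-free one. The sketch below estimates torso pitch this way; the axis and sign convention (`accel_x = g*sin(pitch)`, `accel_z = g*cos(pitch)` at rest) and the blend factor `alpha` are assumptions, and a DRL policy may instead learn its own fusion from raw inputs.

```python
import math


def complementary_filter(pitch_prev, gyro_rate, accel_x, accel_z, dt, alpha=0.98):
    """Fuse gyro and accelerometer readings into one pitch estimate (rad).

    gyro_rate: pitch rate in rad/s. accel_x, accel_z: accelerometer readings
    along the body's forward and upward axes (assumed convention).
    """
    pitch_gyro = pitch_prev + gyro_rate * dt      # integrate angular rate
    pitch_accel = math.atan2(accel_x, accel_z)    # gravity direction at rest
    # Trust the gyro over short horizons, the accelerometer over long ones.
    return alpha * pitch_gyro + (1.0 - alpha) * pitch_accel
```

Run once per control step, the filter corrects gyro drift gradually without passing accelerometer noise straight into the balance controller.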

7. Challenges and Limitations

7.1 Sim-to-Real Transfer

- Policies trained in simulation may fail on real robots due to unmodeled dynamics.

- Solutions include:

  - Domain randomization

  - System identification

  - On-policy fine-tuning on real hardware

7.2 Sample Efficiency

- DRL often requires millions of environment steps to converge.

- Model-based DRL and imitation learning can improve sample efficiency.

7.3 Safety and Robustness

- Exploration in physical environments risks damage to robots.

- Safe DRL techniques, such as constraint-aware policies, mitigate these risks.
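One way to make a policy constraint-aware is Lagrangian relaxation: a safety cost (for example, time spent near a joint limit) is kept below a budget by a multiplier that grows whenever the budget is exceeded. This is only one of several safe-RL approaches and is chosen here for illustration; the function names, learning rate, and cost definition are assumptions.

```python
def update_lagrange_multiplier(multiplier, episode_cost, cost_limit, lr=0.05):
    """Raise the multiplier when the cost exceeds its limit, lower it otherwise.

    The multiplier is projected back to zero so it never becomes negative.
    """
    return max(0.0, multiplier + lr * (episode_cost - cost_limit))


def penalized_return(episode_return, episode_cost, multiplier):
    """Objective the policy maximizes: task return minus weighted safety cost."""
    return episode_return - multiplier * episode_cost
```

Alternating policy updates on `penalized_return` with multiplier updates makes unsafe behavior progressively more expensive until the cost budget is respected.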

7.4 Interpretability

- DRL policies are often black-box neural networks.

- Understanding decision-making is critical for safety certification and regulatory approval.

8. Future Directions

8.1 Hierarchical Reinforcement Learning

- Combines high-level decision-making with low-level controllers for modular policy design.

- Enhances interpretability and generalization.

8.2 Sim-to-Real via Digital Twins

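- A digital twin, a simulation kept synchronized with the physical robot, could enable continuous online learning and adjustment.

- Policies could then adapt to changing real-world conditions such as wear, payload, or surface changes.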

8.3 Multi-Robot Balance Coordination

- DRL can extend to cooperative bipedal robots in shared environments.

- Applications include coordinated lifting, collaborative tasks, and swarm locomotion.

8.4 Integration with Soft Robotics

- Combining DRL with compliant actuators enhances safety and human-robot interaction.

- Soft joints and variable stiffness allow adaptive balance in unstructured terrains.

9. Industrial and Research Implications

- Manufacturing: DRL-powered humanoid robots can operate alongside humans, improving efficiency.

- Healthcare: Robotic exoskeletons leverage DRL for patient support and dynamic balance.

- Service Industry: Hotel, retail, and delivery robots maintain stability in unpredictable human environments.

- Scientific Research: DRL methods advance understanding of locomotion, control, and neural network applications in robotics.

10. Market and Commercial Prospects

10.1 Growth Drivers

- Labor shortages and automation demand

- Advances in AI and sensor technologies

- Demand for autonomous, adaptive humanoid robots in diverse sectors

10.2 Adoption Challenges

- High development costs

- Hardware reliability and maintenance

- Regulatory and safety compliance

10.3 Outlook

- DRL advances could make bipedal robots commercially viable within the next 5–10 years.

- Integration with Robotics-as-a-Service (RaaS) models could accelerate adoption in logistics, healthcare, and service sectors.

11. Conclusion

Deep Reinforcement Learning has driven substantial progress in bipedal robot balance control, enabling:

- Dynamic push recovery

- Adaptive terrain navigation

- Energy-efficient locomotion

- Multi-task generalization

These advances address key limitations of traditional model-based controllers, offering robustness, adaptability, and real-world applicability. Challenges remain, including sample efficiency, safety, sim-to-real transfer, and interpretability, but ongoing research and industrial integration point toward a future where humanoid robots can operate reliably in human-centered environments.

DRL-driven bipedal balance not only advances robotics but also serves as a model for autonomous control in other dynamic, underactuated systems, setting the stage for a new era of intelligent, adaptive machines.