Introduction: A New Epoch in Robotics and AI Integration

The emergence of Gemini Robotics — a family of advanced artificial intelligence models developed by Google DeepMind — marks a pivotal shift in robotics technology. Traditionally, robots were constrained to highly structured environments and tightly scripted motions. They struggled to interpret open‑ended instructions or adapt dynamically to unfamiliar real‑world scenarios. Gemini Robotics breaks this boundary by enabling robots to perceive their surroundings, interpret natural language instructions, reason about spatial and temporal contexts, and execute complex actions autonomously.

This article examines how Gemini Robotics has become a core technology direction in robotics, driving progress toward general‑purpose robotic agents that understand physical environments and perform long‑horizon, multi‑step tasks across diverse embodiments and use cases. It covers the origins of the models, their key capabilities, the underlying technical principles, benchmark results, practical applications, current limitations, and future prospects.

1. Origins of Gemini Robotics: Extending Gemini AI to the Physical World

1.1 From Language Models to Physical Agents

Gemini Robotics builds on Gemini 2.0, a multimodal AI model developed by Google DeepMind. While Gemini excels at understanding text, images, and audio in digital contexts, Gemini Robotics extends this intelligence to embodied agents that interact with the physical world.

Rather than merely recognizing objects or navigating simple obstacles, Gemini Robotics enables robots to:

- Understand scenes and objects in both 2D and 3D, including relationships and spatial affordances.

- Interpret natural language instructions and decompose them into concrete sub‑tasks.

- Generate action plans and execute them through motor commands.

This represents a fundamental advance beyond earlier perception‑only models, establishing a tight integration between cognition and physical action.

1.2 Dual‑Model Architecture: ER and VLA Synergy

A key innovation in Gemini Robotics is its dual‑model architecture, which separates high‑level planning from physical execution:

- Gemini Robotics‑ER 1.5 (Embodied Reasoning): A vision‑language model that performs spatial reasoning, long‑horizon planning, and contextual decision‑making. This model can take an instruction like “sort recyclables from trash” and break it into actionable steps.

- Gemini Robotics 1.5 (Vision‑Language‑Action, or VLA): A model that translates visual inputs and language into precise action commands for robotic actuators. It enables robots to carry out tasks like grasping objects or manipulating tools.

The combination of these models enables agentic behavior — where robots not only act but think before acting, assess alternatives, and adjust plans on the fly.
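The division of labor between a planning model and an execution model can be sketched as a simple orchestration loop. This is a minimal illustration, not the actual Gemini Robotics interface: `run_task`, `toy_planner`, and `toy_executor` are hypothetical names, and the two toy functions merely stand in for the ER and VLA models.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class StepResult:
    step: str
    succeeded: bool


def run_task(
    instruction: str,
    plan: Callable[[str], List[str]],
    execute: Callable[[str], bool],
    max_retries: int = 1,
) -> List[StepResult]:
    """Plan once, then execute each step in order, retrying failed steps."""
    results: List[StepResult] = []
    for step in plan(instruction):
        ok = False
        for _ in range(max_retries + 1):
            ok = execute(step)
            if ok:
                break
        results.append(StepResult(step, ok))
        if not ok:
            # Stop here; a fuller system would hand control back to the planner.
            break
    return results


def toy_planner(instruction: str) -> List[str]:
    # Hypothetical stand-in for the high-level reasoning model.
    if "recyclables" in instruction:
        return ["locate items", "pick up bottle", "place bottle in recycling bin"]
    return [instruction]


def toy_executor(step: str) -> bool:
    # Hypothetical stand-in for the action model; always reports success.
    return True
```

Calling `run_task("sort recyclables from trash", toy_planner, toy_executor)` returns one `StepResult` per planned step. Keeping the planner and executor behind plain callables mirrors the separation described above: either side can be swapped without touching the loop.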

2. Core Capabilities That Redefine Robotic Intelligence

2.1 Integrated Perception, Reasoning, and Action

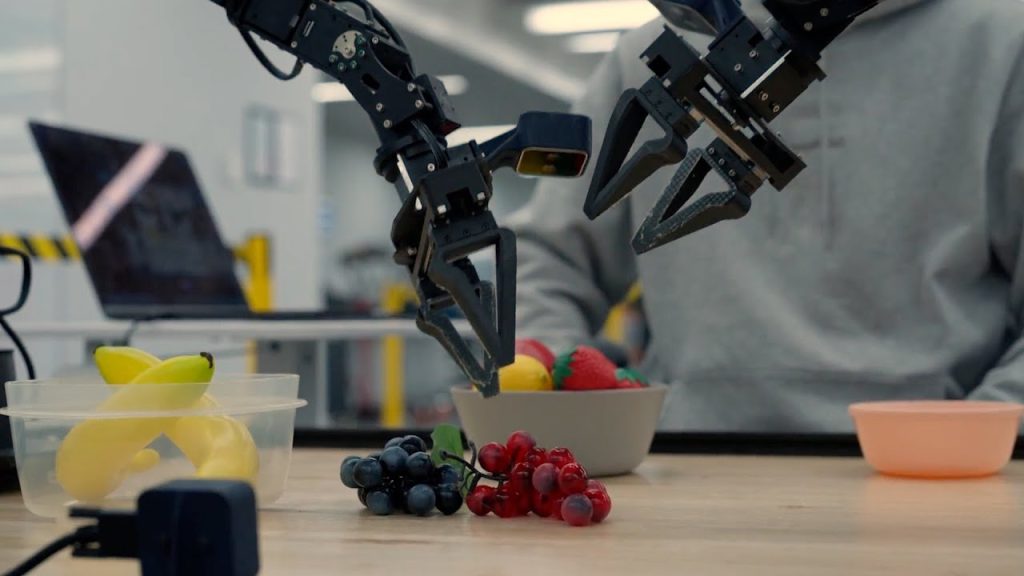

Gemini Robotics integrates vision, language, and physical control — often referred to as a Vision‑Language‑Action (VLA) framework — enabling robots to interpret complex environments and translate human instructions into multi‑stage actions.

For example, when a user says “prepare a simple snack with these ingredients”, a Gemini‑powered robot can:

- Detect and identify objects relevant to the task (e.g., bread, fruit, utensils).

- Plan a sequence of manipulation actions (e.g., pick up bread, apply spread, place on plate).

- Execute precise motor commands to fulfill each step.

- Adapt dynamically if an object is moved or occluded.
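The last point, adapting when an object moves or is occluded, amounts to re-observing the scene before every step instead of trusting an earlier snapshot. The sketch below illustrates that idea only; `execute_with_reobservation`, `observe`, and `act` are hypothetical names, and a real system would work on camera images rather than a dictionary of positions.

```python
from typing import Callable, Dict, List, Tuple

Position = Tuple[float, float]
Scene = Dict[str, Position]


def execute_with_reobservation(
    steps: List[Tuple[str, str]],
    observe: Callable[[], Scene],
    act: Callable[[str, str, Position], None],
) -> List[str]:
    """Run (action, object) steps, re-observing the scene before each one."""
    log: List[str] = []
    for action, obj in steps:
        scene = observe()  # fresh perception, so moved objects are picked up
        if obj not in scene:
            # Occluded or missing: record it instead of acting on stale data.
            log.append(f"skipped {action} {obj}: not visible")
            continue
        act(action, obj, scene[obj])
        log.append(f"{action} {obj} at {scene[obj]}")
    return log
```

If the bread is nudged between the pick and place steps, the second call to `observe` returns its new position and the action is issued against that position rather than the old one.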

This integrated approach mirrors human cognitive processes more closely than traditional robotic pipelines, which often separate perception and control into disconnected modules.

2.2 Multi‑Embodiment Learning and Generalization

One of Gemini Robotics’ most significant breakthroughs is its ability to learn across different robot embodiments. Skills learned on one physical platform, such as the bi‑arm ALOHA robot, can transfer to other robotic forms, including humanoids (e.g., Apptronik’s Apollo) and bi‑arm Franka manipulators, without specializing the model for each new embodiment.

This capacity for cross‑embodiment transfer can substantially shorten the path to deploying intelligent behaviors on new hardware. Rather than building a custom model for each robot, developers can reuse one model across platforms, which reduces development time and cost.

2.3 Deep Spatial Understanding and Reasoning

Gemini Robotics‑ER 1.5 extends beyond simple object recognition to embodied reasoning — meaning robots can understand the geometry, spatial relationships, trajectories, and physical properties of their surroundings.

This capability enables robots to:

- Predict object movement or collision risk.

- Reason about relative positions in 3D space.

- Plan efficient and safe motion paths.

- Adjust strategies based on environmental changes.

The result is robots that can tackle more intricate tasks with contextual awareness and adaptive planning.
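To make "predict object movement or collision risk" concrete, here is a classical geometric check, offered as an illustration of the kind of question involved and not as a description of how the model works internally. It assumes both objects move at constant velocity; the function names and the `radius_sum` threshold are hypothetical.

```python
import math
from typing import Sequence, Tuple

Vec = Sequence[float]


def closest_approach(p1: Vec, v1: Vec, p2: Vec, v2: Vec, horizon: float) -> Tuple[float, float]:
    """Return (time, distance) of closest approach within [0, horizon].

    Assumes straight-line, constant-velocity motion for both objects.
    """
    r = [b - a for a, b in zip(p1, p2)]  # relative position
    w = [b - a for a, b in zip(v1, v2)]  # relative velocity
    ww = sum(c * c for c in w)
    # Unconstrained minimizer of |r + w t|^2 is t = -(r . w) / (w . w).
    t = 0.0 if ww == 0.0 else -sum(a * b for a, b in zip(r, w)) / ww
    t = min(max(t, 0.0), horizon)
    d = math.sqrt(sum((a + b * t) ** 2 for a, b in zip(r, w)))
    return t, d


def collision_risk(p1: Vec, v1: Vec, p2: Vec, v2: Vec, horizon: float, radius_sum: float) -> bool:
    """True if the objects come closer than radius_sum within the horizon."""
    _, d = closest_approach(p1, v1, p2, v2, horizon)
    return d < radius_sum
```

For a gripper moving along the x axis toward a stationary cup two units away and slightly off‑axis, the check flags a risk only if the look‑ahead horizon is long enough for the gripper to reach the cup.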

3. Technical Innovations Driving Gemini Robotics

3.1 Vision‑Language‑Action (VLA) Modelling

The VLA model at the heart of Gemini Robotics combines multimodal understanding with action specification. It processes images, text, audio, and video to generate action commands that a robot can execute.

This model operates at a level akin to semantic motor planning: it translates abstract goals into motor directives that are physically grounded, enabling robots to carry out tasks with fine motor skills and precision, such as folding paper or stacking boxes.

3.2 Embodied Reasoning With ER Models

Gemini Robotics‑ER 1.5 specializes in spatial and temporal reasoning within physical environments. It can answer questions like “Where should I place this item?” or “Is this object reachable?” by constructing internal representations of object geometry and scene layout.

This high‑level reasoning is crucial for long‑horizon tasks requiring planning, prediction, and adjustment — capabilities that move robots closer to human‑like flexibility in task execution.
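A question such as “Is this object reachable?” has a simple geometric counterpart for an idealized arm. The sketch below assumes a planar two‑link arm with no joint limits or obstacles, which is far cruder than the scene understanding described above; `is_reachable` and its parameters are hypothetical and serve only to show what a reachability answer encodes.

```python
import math
from typing import Tuple


def is_reachable(
    target_xy: Tuple[float, float],
    base_xy: Tuple[float, float],
    l1: float,
    l2: float,
) -> bool:
    """Reachability for an idealized planar two-link arm.

    The arm can reach any point whose distance d from the base satisfies
    |l1 - l2| <= d <= l1 + l2 (an annulus around the base). Joint limits
    and obstacles are ignored in this sketch.
    """
    d = math.dist(target_xy, base_xy)
    return abs(l1 - l2) <= d <= l1 + l2
```

With two 0.3 m links, a target 0.5 m from the base is reachable while one 0.7 m away is not. With unequal links, points too close to the base are also excluded.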

3.3 Hybrid Execution Frameworks

To reconcile the computational demands of AI reasoning with real‑time physical control, Gemini Robotics models often operate with a hybrid architecture:

- Cloud‑based backbone for intensive reasoning and planning;

- On‑device action decoders for low‑latency control and responsiveness.

This design allows robots to maintain reactive behavior while still benefiting from deep cognitive capabilities.
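One way to picture this split is a local controller that acts on every control tick from a small buffer of actions and contacts the slower remote model only when the buffer runs low. The sketch below is a simplified, synchronous illustration under that assumption; `LocalController`, `request_chunk`, and `refill_below` are hypothetical names, and a real system would fetch chunks asynchronously so the control loop never blocks.

```python
from collections import deque
from typing import Callable, Deque, List


class LocalController:
    """Emits one action per tick; refills its buffer from a slow planner."""

    def __init__(self, request_chunk: Callable[[int], List[float]], refill_below: int = 2):
        self.request_chunk = request_chunk  # stand-in for the remote backbone
        self.refill_below = refill_below
        self.buffer: Deque[float] = deque()
        self.remote_calls = 0

    def tick(self, t: int) -> float:
        # Contact the remote model only when few buffered actions remain.
        if len(self.buffer) < self.refill_below:
            self.buffer.extend(self.request_chunk(t))
            self.remote_calls += 1
        return self.buffer.popleft()
```

With chunks of five actions and `refill_below=1`, ten control ticks trigger only two remote requests. Raising `refill_below` trades extra requests for a larger safety margin against network latency.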

4. Benchmarking and Measurable Performance Gains

Google DeepMind reports that Gemini Robotics‑ER 1.5 achieves state‑of‑the‑art performance on a number of academic benchmarks for embodied reasoning and spatial understanding, including:

- Embodied Reasoning Question Answering (ERQA);

- Point‑Bench and RoboSpatial‑Pointing;

- Where2Place and RefSpatial.

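These benchmarks measure perception and reasoning skills such as pointing, spatial question answering, and placement prediction. Strong results indicate that the model interprets tasks and spatial context better than prior models; they do not by themselves measure end‑to‑end physical execution on a robot.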
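Pointing‑style tasks are often scored by checking whether a predicted point falls inside a ground‑truth region. The sketch below shows that scoring rule as an assumption about the general approach; it is not taken from the official evaluation code of any benchmark listed above, and the function names are hypothetical.

```python
from typing import Sequence, Tuple

Mask = Sequence[Sequence[bool]]  # mask[row][col] is True inside the target region


def point_in_mask(point: Tuple[int, int], mask: Mask) -> bool:
    """True if (row, col) lies inside the image and on a True mask cell."""
    row, col = point
    if not (0 <= row < len(mask) and 0 <= col < len(mask[0])):
        return False  # out-of-bounds predictions count as misses
    return bool(mask[row][col])


def pointing_accuracy(predictions: Sequence[Tuple[int, int]], masks: Sequence[Mask]) -> float:
    """Fraction of predicted points that land inside their target mask."""
    if len(predictions) != len(masks):
        raise ValueError("need exactly one mask per prediction")
    if not predictions:
        return 0.0
    hits = sum(point_in_mask(p, m) for p, m in zip(predictions, masks))
    return hits / len(predictions)
```

A score computed this way rewards choosing a valid location but says nothing about whether a robot could then physically reach or manipulate it.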

5. Practical Applications Across Industries

5.1 Warehouse and Logistics Automation

Gemini Robotics brings sophisticated autonomy to logistics robots that must navigate dynamically shifting environments, sort items, and respond to verbal or visual commands. Its capacity for planning and perception reduces the need for rigid infrastructure and extensive pre‑programming.

5.2 Service and Domestic Robotics

In home and service settings, Gemini‑enabled robots can interact naturally with humans, understanding everyday language and performing tasks like organizing items, tidying spaces, or assisting with chores — all with minimal explicit scripting.

5.3 Healthcare and Elderly Care Support

Robots equipped with Gemini AI could assist caregivers by understanding complex instructions and navigating cluttered environments safely — for example, retrieving medication, guiding mobility, or monitoring patient conditions.

6. Challenges and Limitations

Despite its breakthroughs, Gemini Robotics faces several hurdles before universal deployment:

- Data requirements: Training on diverse multimodal datasets remains resource‑intensive.

- Safety and robustness: Ensuring reliable behavior in uncontrolled environments is crucial, requiring extensive validation and fail‑safe mechanisms.

- Ethical and regulatory concerns: As robots gain higher autonomy, clear standards for accountability and safety must be established.

7. The Future of Generalist Robotics With Gemini AI

Gemini Robotics signifies a major leap toward generalist robots — machines that can flexibly adapt to new tasks and environments without extensive retraining or human intervention.

Key directions include:

- Lifelong learning and continual adaptation across environments and tasks.

- Enhanced human‑robot collaboration through natural language and multimodal interfaces.

- Cross‑platform standardization where a single model family can power diverse robotic hardware.

As research continues, Gemini models may become the default cognitive layer for embodied AI across consumer, industrial, and service robotics.

Conclusion: Gemini Robotics as a Core Technological Direction

Gemini Robotics encapsulates a transformative vision for robotics: robots that perceive and reason about their surroundings, interpret natural language instructions, and translate that understanding into physical actions autonomously and flexibly. By unifying vision, language, and action into a coherent AI architecture, and pairing it with powerful embodied reasoning models, Google DeepMind has positioned Gemini Robotics at the heart of next‑generation robotics research and development.

While significant challenges remain — particularly around safety, deployment readiness, and general applicability — the breakthroughs achieved thus far paint a compelling picture of a future where robots are not just automated machines but intelligent, adaptive agents capable of meaningful collaboration in real‑world human environments.