Introduction: The Rise of Human-Robot Collaboration

As industrial and service robotics evolve, collaborative robots (cobots) are increasingly operating side by side with humans. Unlike traditional industrial robots confined to cages, cobots are designed to work in shared spaces, performing tasks ranging from assembly and logistics to healthcare assistance. This shift offers unprecedented efficiency, flexibility, and productivity, but it also raises critical safety challenges.

Ensuring safe collaboration requires a multi-layered approach that integrates:

- Safety regulations and standards – Providing frameworks to minimize risk

- Advanced sensor technologies – Enabling real-time awareness of humans and environments

- AI-driven decision-making – Allowing robots to predict, adapt, and respond safely

This article explores these three pillars in depth, emphasizing how they collectively safeguard collaborative scenarios. Through professional analysis and detailed examples, we examine current technologies, regulatory frameworks, control strategies, and future directions in human-robot collaboration safety.

1. Safety Regulations in Collaborative Robotics

1.1 Historical Context and the Evolution of Standards

Industrial robotics safety traditionally relied on physical separation, including cages, fences, and interlocks. The rise of cobots required a paradigm shift:

- Risk-based approaches replaced purely preventive ones

- Standards evolved to accommodate dynamic human–robot interactions

- Regulatory frameworks now emphasize design, control, and environmental safety

Key regulatory documents include:

- ISO 10218-1/2 – Safety requirements for industrial robots

- ISO/TS 15066 – Safety requirements for collaborative robot operations

- ANSI/RIA R15.06 – U.S. national adoption of ISO 10218-1/2 for industrial robot safety

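These frameworks define four collaborative operation modes (safety-rated monitored stop, hand guiding, speed and separation monitoring, and power and force limiting). They set limits on contact forces and pressures, robot speeds, and axis and space ranges, and they specify risk assessment methodologies to ensure human safety.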
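For the power and force limiting mode, ISO/TS 15066 describes a model that treats a transient contact as a spring absorbing the kinetic energy of the two colliding masses. This links a permissible force to a maximum relative speed through the energy balance ½μv² = F²/(2k), where μ = (1/m_H + 1/m_R)⁻¹ is the reduced mass. The minimal sketch below applies that balance. All numeric values are hypothetical placeholders, not the body-region limits tabulated in the standard.

```python
import math


def reduced_mass(m_human: float, m_robot: float) -> float:
    """Two-body reduced mass mu = (1/m_H + 1/m_R)^-1, in kg."""
    return 1.0 / (1.0 / m_human + 1.0 / m_robot)


def max_relative_speed(f_max: float, k: float, m_human: float, m_robot: float) -> float:
    """Largest relative speed (m/s) whose kinetic energy, stored entirely in a
    spring of stiffness k (N/m), yields a peak force no greater than f_max (N).

    Energy balance: 0.5 * mu * v**2 = f_max**2 / (2 * k)  =>  v = f_max / sqrt(mu * k)
    """
    mu = reduced_mass(m_human, m_robot)
    return f_max / math.sqrt(mu * k)


# Placeholder values for illustration only (NOT the ISO/TS 15066 tables).
F_MAX = 140.0     # N, hypothetical permissible force for one body region
K = 75_000.0      # N/m, hypothetical effective spring constant of that region
M_HUMAN = 0.6     # kg, hypothetical effective mass of that region
M_ROBOT = 10.0    # kg, hypothetical effective robot mass (moving parts + payload)

v_max = max_relative_speed(F_MAX, K, M_HUMAN, M_ROBOT)
print(f"permissible relative speed: {v_max:.3f} m/s")
```

Because v scales with 1/√(μk), a heavier payload raises m_R, which raises μ and lowers the permissible speed.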

1.2 Risk Assessment Methodologies

Collaborative scenarios require systematic risk assessment, typically conducted through:

- Hazard Identification: Determining potential sources of injury

- Risk Estimation: Evaluating likelihood and severity

- Risk Reduction Measures: Implementing physical, control, and procedural safeguards

This iterative process follows the general risk assessment method of ISO 12100. It is central to compliance with ISO 10218 and ISO/TS 15066 and underpins design, programming, and deployment decisions.
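The estimate, reduce, and re-estimate loop can be sketched with a simple severity/likelihood matrix. The 1–4 scales, the multiplicative score, and the acceptance bands below are hypothetical choices made for illustration and are not taken from any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hazard:
    name: str
    severity: int     # 1 (minor) .. 4 (fatal), hypothetical scale
    likelihood: int   # 1 (rare) .. 4 (frequent), hypothetical scale


def risk_score(h: Hazard) -> int:
    return h.severity * h.likelihood


def risk_level(score: int) -> str:
    # Hypothetical acceptance bands; a real assessment defines its own criteria.
    if score <= 3:
        return "acceptable"
    if score <= 8:
        return "reduce further if practicable"
    return "unacceptable"


def apply_measure(h: Hazard, severity_drop: int = 0, likelihood_drop: int = 0) -> Hazard:
    """Re-estimate a hazard after a risk-reduction measure (scores never go below 1)."""
    return Hazard(h.name, max(1, h.severity - severity_drop), max(1, h.likelihood - likelihood_drop))


pinch = Hazard("hand pinched between gripper and fixture", severity=3, likelihood=3)
print(risk_score(pinch), risk_level(risk_score(pinch)))

# Iterate: power and force limiting lowers severity; a presence-sensing
# device that slows the robot lowers likelihood. Re-estimate after each step.
after = apply_measure(apply_measure(pinch, severity_drop=1), likelihood_drop=2)
print(risk_score(after), risk_level(risk_score(after)))
```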

1.3 Regulatory Influence on Design and Operation

Regulations influence multiple aspects of cobot design:

- Force-limiting actuators to prevent crushing injuries

- Safety-rated monitored stops and emergency stop systems

- Redundant sensors and control loops to detect abnormal situations

- Validation requirements for safety-related control software, which are beginning to extend to AI-enabled robots

These standards create a baseline for safe human-robot interaction while promoting innovation in sensor and AI technologies.
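Redundant monitoring is often built from two independent channels that measure the same quantity and must agree. The following sketch uses hypothetical function names and a made-up tolerance. It compares two joint-speed readings, for example from a motor-side and a link-side encoder, and treats any disagreement as a fault that leads to a protective stop. It illustrates only the cross-checking idea; certified implementations run on safety-rated hardware.

```python
class ChannelFault(Exception):
    """Raised when redundant channels disagree; the caller must enter a safe state."""


def cross_checked_speed(speed_a: float, speed_b: float, tolerance: float) -> float:
    """Return a joint speed (rad/s) only if both independent channels agree.

    On agreement, report the larger magnitude, since overestimating speed is
    the conservative error for speed monitoring. On disagreement, raise so the
    caller commands a protective stop instead of trusting either channel.
    """
    if abs(speed_a - speed_b) > tolerance:
        raise ChannelFault(f"channel mismatch: {speed_a:.3f} vs {speed_b:.3f} rad/s")
    return max(abs(speed_a), abs(speed_b))


def monitor(speed_a: float, speed_b: float, limit: float, tolerance: float = 0.02) -> str:
    """Hypothetical per-cycle check combining channel redundancy and a speed limit."""
    try:
        speed = cross_checked_speed(speed_a, speed_b, tolerance)
    except ChannelFault:
        return "protective_stop"
    return "protective_stop" if speed > limit else "run"


print(monitor(0.50, 0.51, limit=1.0))   # channels agree, speed under the limit
print(monitor(0.50, 0.90, limit=1.0))   # channels disagree
```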

2. Sensor Technologies for Safety

2.1 Core Requirements for Sensing in Collaborative Environments

Effective human-robot collaboration depends on the robot’s ability to perceive and interpret its surroundings. Core requirements include:

- Detection Range and Coverage: Ability to monitor all human interaction zones

- Latency: Rapid sensing to allow timely interventions

- Accuracy and Reliability: Ensuring minimal false positives or negatives

These criteria guide the selection and integration of sensors in collaborative systems.
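Latency matters because it enters directly into the protective separation distance that ISO/TS 15066 uses for speed and separation monitoring, S_p = S_h + S_r + S_s + C + Z_d + Z_r. The sketch below evaluates a simplified form of that sum that assumes constant speeds. The 1.6 m/s human speed is the walking speed commonly taken from ISO 13855. Every other value is a hypothetical placeholder.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class SsmParams:
    v_human: float = 1.6        # m/s, human approach speed
    v_robot: float = 0.5        # m/s, robot speed toward the human (hypothetical)
    t_reaction: float = 0.10    # s, sensing + processing latency (hypothetical)
    t_stop: float = 0.30        # s, robot stopping time (hypothetical)
    s_stop: float = 0.08        # m, robot stopping distance S_s (hypothetical)
    intrusion: float = 0.10     # m, intrusion distance C (hypothetical)
    z_sensor: float = 0.05      # m, human position uncertainty Z_d (hypothetical)
    z_robot: float = 0.01       # m, robot position uncertainty Z_r (hypothetical)


def protective_separation_distance(p: SsmParams) -> float:
    """Simplified S_p assuming constant human and robot speeds."""
    s_h = p.v_human * (p.t_reaction + p.t_stop)  # human travel while the robot reacts and stops
    s_r = p.v_robot * p.t_reaction               # robot travel before braking begins
    return s_h + s_r + p.s_stop + p.intrusion + p.z_sensor + p.z_robot


base = SsmParams()
slower = replace(base, t_reaction=base.t_reaction + 0.05)  # 50 ms of extra latency
print(f"S_p = {protective_separation_distance(base):.3f} m")
print(f"S_p with +50 ms latency = {protective_separation_distance(slower):.3f} m")
```

With these placeholder values, 50 ms of extra latency adds (v_h + v_r) × 0.05 s ≈ 0.105 m to the required separation. That is why low-latency sensing lets a cell be laid out more compactly.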

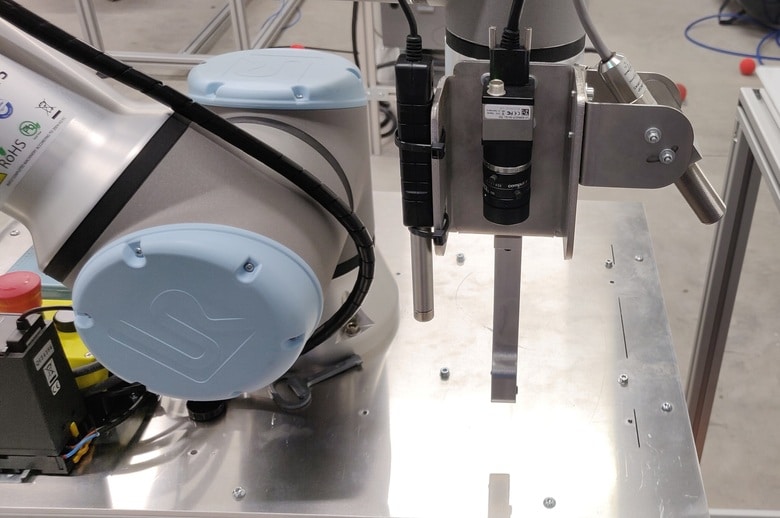

2.2 Vision-Based Sensors

Cameras and depth sensors form the backbone of perception in cobots:

- RGB Cameras: Enable human detection and pose estimation

- Depth Sensors (LiDAR, Time-of-Flight Cameras): Provide 3D environmental mapping and obstacle detection

- Stereo Vision Systems: Enhance accuracy in locating objects and humans in real time

Applications include workspace monitoring, gesture recognition, and collision avoidance.
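Workspace monitoring with a depth camera typically starts by back-projecting depth pixels into 3D points through the pinhole camera model and then testing those points against a monitored zone. In this sketch the intrinsics (fx, fy, cx, cy) and the zone bounds are hypothetical, and the zone is expressed in the camera frame. A real cell would first transform the points into the robot frame using calibrated extrinsics.

```python
from typing import Iterable, List, Tuple

Point3 = Tuple[float, float, float]


def back_project(u: int, v: int, depth_m: float,
                 fx: float, fy: float, cx: float, cy: float) -> Point3:
    """Pinhole back-projection of pixel (u, v) with depth in metres into the camera frame."""
    return ((u - cx) * depth_m / fx, (v - cy) * depth_m / fy, depth_m)


def points_in_zone(points: Iterable[Point3], lo: Point3, hi: Point3) -> List[Point3]:
    """Keep the points that fall inside an axis-aligned box (the monitored zone)."""
    return [p for p in points
            if all(c_lo <= c <= c_hi for c, c_lo, c_hi in zip(p, lo, hi))]


# Hypothetical intrinsics for a 640x480 depth image.
FX = FY = 525.0
CX, CY = 319.5, 239.5

pixels = [(320, 240, 1.2), (100, 50, 3.5), (400, 260, 0.0)]  # (u, v, depth in m)
# A depth of 0.0 is treated here as "no return" and skipped.
cloud = [back_project(u, v, d, FX, FY, CX, CY) for u, v, d in pixels if d > 0.0]
zone_lo, zone_hi = (-0.5, -0.5, 0.3), (0.5, 0.5, 1.5)
intruders = points_in_zone(cloud, zone_lo, zone_hi)
print(f"{len(intruders)} point(s) inside the monitored zone")
```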

2.3 Proximity and Force Sensors

- Capacitive or ultrasonic proximity sensors: Detect humans entering restricted zones

- Force/Torque Sensors: Measure interaction forces to prevent harmful contact

- Joint Torque Sensing: Enables torque-limiting and compliant motion

These sensors allow real-time intervention, such as slowing or stopping motion before impact.
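A common pattern behind such interventions is to compare the measured joint torques with the torques predicted by the robot's dynamics model and to treat a large residual as unexpected contact. A wrist force/torque sensor can be checked against a force limit in the same loop. The thresholds and function names below are hypothetical. Production systems typically use more robust residuals, such as momentum observers, rather than this direct difference.

```python
import math
from typing import Sequence


def torque_residual_exceeded(tau_measured: Sequence[float],
                             tau_model: Sequence[float],
                             thresholds: Sequence[float]) -> bool:
    """True if any joint's |measured - model-predicted| torque (N·m) exceeds its threshold."""
    if not (len(tau_measured) == len(tau_model) == len(thresholds)):
        raise ValueError("per-joint sequences must have equal length")
    return any(abs(m - e) > t for m, e, t in zip(tau_measured, tau_model, thresholds))


def wrist_force_exceeded(fx: float, fy: float, fz: float, f_limit: float) -> bool:
    """True if the force magnitude (N) from a 6-axis F/T sensor exceeds f_limit."""
    return math.sqrt(fx * fx + fy * fy + fz * fz) > f_limit


def react(tau_measured, tau_model, thresholds, wrench_force, f_limit) -> str:
    """Hypothetical per-cycle decision: stop on either contact indicator."""
    if torque_residual_exceeded(tau_measured, tau_model, thresholds):
        return "stop"
    if wrist_force_exceeded(*wrench_force, f_limit):
        return "stop"
    return "continue"


print(react([10.0, 5.0], [9.5, 5.1], [1.0, 1.0], (3.0, 4.0, 0.0), f_limit=50.0))
print(react([10.0, 8.0], [9.5, 5.1], [1.0, 1.0], (3.0, 4.0, 0.0), f_limit=50.0))
```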

2.4 Wearable and Environmental Sensors

- Wearable devices: Track human location and posture for predictive safety

- Floor sensors and pressure mats: Monitor workspace occupancy

- Environmental sensors: Detect obstacles, spills, or dynamic changes

Integration of multiple sensor modalities increases reliability through redundancy and cross-validation.
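One way to realize cross-validation is to check that independent distance estimates agree within their stated uncertainties before combining them. The sketch below gates each pair of readings at a hypothetical 3-sigma bound. It fuses consistent readings with an inverse-variance weighted mean and otherwise falls back to the smallest reported distance, which is the conservative choice. The sensor names and noise figures are illustrative assumptions.

```python
import math
from typing import List, Tuple

Reading = Tuple[str, float, float]   # (sensor name, distance in m, 1-sigma uncertainty in m)


def fuse_distance(readings: List[Reading], gate_sigma: float = 3.0) -> Tuple[float, bool]:
    """Fuse independent estimates of the distance to the nearest person.

    Returns (distance, consistent). If every pair of readings agrees within
    gate_sigma combined standard deviations, return the inverse-variance
    weighted mean; otherwise return the smallest reported distance, which
    is the conservative choice for safety.
    """
    if not readings:
        raise ValueError("no sensor readings")
    for i in range(len(readings)):
        for j in range(i + 1, len(readings)):
            _, d_i, s_i = readings[i]
            _, d_j, s_j = readings[j]
            if abs(d_i - d_j) > gate_sigma * math.sqrt(s_i ** 2 + s_j ** 2):
                return min(d for _, d, _ in readings), False
    weights = [1.0 / s ** 2 for _, _, s in readings]
    fused = sum(w * d for w, (_, d, _) in zip(weights, readings)) / sum(weights)
    return fused, True


print(fuse_distance([("lidar", 1.00, 0.02), ("depth_cam", 1.04, 0.05)]))   # consistent
print(fuse_distance([("lidar", 1.00, 0.02), ("depth_cam", 2.00, 0.05)]))   # disagreement
```

The weighted mean lands close to the more precise sensor. A disagreement is also worth logging as a possible fault, since it may indicate occlusion or miscalibration.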

3. AI-Driven Decision-Making for Safe Interaction

3.1 The Need for AI in Collaborative Safety

Reactive safety measures such as protective stops and alarms remain the safety baseline, but on their own they can halt work frequently and force conservative speeds in dynamic shared workspaces. AI enables predictive and adaptive behavior, allowing robots to anticipate human actions and adjust motion proactively.

3.2 Perception and Human Intent Recognition

AI algorithms process sensor data to:

- Identify humans and track their positions

- Recognize gestures, body posture, and movement patterns

- Predict trajectories and potential collisions

Methods include convolutional neural networks (CNNs) for visual recognition, and recurrent neural networks (RNNs) or transformers for temporal prediction of human movement.
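Learned predictors are often compared against a constant-velocity model, which also serves as a fallback when a network's output is unavailable. The sketch below is that baseline, not a CNN, RNN, or transformer. It extrapolates a tracked person on the floor plane and reports the first prediction step that comes within a hypothetical safety radius of a fixed robot position. All function names and values are illustrative.

```python
import math
from typing import List, Optional, Tuple

Vec2 = Tuple[float, float]


def estimate_velocity(track: List[Vec2], dt: float) -> Vec2:
    """Finite-difference velocity (m/s) from the last two tracked positions."""
    (x0, y0), (x1, y1) = track[-2], track[-1]
    return ((x1 - x0) / dt, (y1 - y0) / dt)


def predict_constant_velocity(track: List[Vec2], dt: float, steps: int) -> List[Vec2]:
    """Extrapolate the person's position for `steps` future samples of length dt."""
    vx, vy = estimate_velocity(track, dt)
    x, y = track[-1]
    return [(x + vx * dt * k, y + vy * dt * k) for k in range(1, steps + 1)]


def first_conflict_step(predicted: List[Vec2], robot_xy: Vec2, radius: float) -> Optional[int]:
    """1-based index of the first predicted position within `radius` of the robot, or None."""
    for k, (x, y) in enumerate(predicted, start=1):
        if math.hypot(x - robot_xy[0], y - robot_xy[1]) < radius:
            return k
    return None


DT = 0.1
track = [(0.0, 0.0), (0.1, 0.0)]             # person walking along +x at 1 m/s
future = predict_constant_velocity(track, dt=DT, steps=10)
step = first_conflict_step(future, robot_xy=(1.0, 0.0), radius=0.35)
if step is None:
    print("no predicted conflict within the horizon")
else:
    print(f"predicted conflict in {step * DT:.1f} s")
```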

3.3 Motion Planning and Collision Avoidance

AI decision-making contributes to dynamic motion planning:

- Real-time trajectory adjustment: Adapting robot paths to human presence

- Velocity and force modulation: Slowing motion when humans approach

- Safe stopping or retreat maneuvers: Avoiding imminent collisions

These capabilities are often implemented through model predictive control (MPC) combined with learned behavior models.
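A much simpler relative of those controllers is a distance-based speed-scaling rule. It is shown here as a hypothetical sketch rather than an MPC implementation: the robot runs at full speed beyond one distance, stops inside another, and scales linearly in between. The two thresholds are placeholder values. In practice the stop distance would be derived from the cell's protective separation distance.

```python
def speed_scale(distance: float, d_stop: float, d_full: float) -> float:
    """Allowed fraction of nominal speed at a given human-robot distance (m).

    0.0 at or inside d_stop, 1.0 at or beyond d_full, linear in between.
    """
    if d_full <= d_stop:
        raise ValueError("d_full must be greater than d_stop")
    if distance <= d_stop:
        return 0.0
    if distance >= d_full:
        return 1.0
    return (distance - d_stop) / (d_full - d_stop)


# Hypothetical thresholds in metres.
D_STOP, D_FULL = 0.9, 2.0

for d in (0.5, 1.2, 1.45, 2.5):
    print(f"distance {d:.2f} m -> {speed_scale(d, D_STOP, D_FULL):.2f} x nominal speed")
```

A linear ramp avoids the abrupt stop-start behavior of a single on/off threshold. An MPC formulation would instead optimize the whole trajectory subject to a distance constraint.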

3.4 Multi-Agent Coordination

In collaborative manufacturing, multiple robots may interact with humans simultaneously. AI enables:

- Task scheduling to reduce interference

- Workspace partitioning based on predicted human and robot trajectories

- Conflict resolution among multiple robots for seamless operation

This approach transforms collaborative environments into predictive, adaptive systems rather than reactive ones.
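Priority-based conflict resolution can be illustrated with a minimal reservation table for shared zones. Requests are processed in priority order, and a request that overlaps an already granted reservation of the same zone is deferred. In this hypothetical sketch, predicted human occupancy is entered as the highest-priority request so that robots always yield to people. The zone names, times, and priorities are made up.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Request:
    agent: str
    zone: str
    start: float    # s
    end: float      # s
    priority: int   # higher is served first


def overlaps(a: Request, b: Request) -> bool:
    """Same zone and intersecting half-open time intervals [start, end)."""
    return a.zone == b.zone and a.start < b.end and b.start < a.end


def schedule(requests: List[Request]) -> Tuple[List[Request], List[Request]]:
    """Greedy, priority-ordered reservation. Returns (granted, deferred);
    deferred agents must wait, re-route, or request a later slot."""
    granted: List[Request] = []
    deferred: List[Request] = []
    for req in sorted(requests, key=lambda r: r.priority, reverse=True):
        if any(overlaps(req, g) for g in granted):
            deferred.append(req)
        else:
            granted.append(req)
    return granted, deferred


HUMAN = 100  # predicted human occupancy outranks every robot
requests = [
    Request("operator (predicted)", "A", 2.0, 5.0, HUMAN),
    Request("robot_1", "A", 4.0, 6.0, 2),
    Request("robot_2", "B", 0.0, 3.0, 1),
    Request("robot_3", "A", 5.0, 7.0, 1),
]
granted, deferred = schedule(requests)
print("granted: ", [r.agent for r in granted])
print("deferred:", [r.agent for r in deferred])
```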

4. Integrating Regulations, Sensors, and AI

4.1 Multi-Layered Safety Architecture

Ensuring safety in collaborative environments requires layered protection, combining:

- Physical safeguards: Guards, compliant materials, energy-limiting actuators

- Sensor-based perception: Real-time monitoring of humans and objects

- AI decision-making: Predictive, adaptive control strategies

This layering provides redundancy, so that a failure in one layer can be caught by another, and it reduces residual risk in line with ISO/TS 15066 guidelines.
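One way to combine such layers in software is most-restrictive-wins arbitration. Every layer proposes a speed limit, the smallest one is applied, and a layer that fails to respond forces a stop. The layer names below are hypothetical. In this design the AI layer can only lower the speed allowed by the other layers, never raise it; that is an assumption of the sketch, not a requirement quoted from the standard.

```python
from typing import Callable, Dict, Optional

# Each layer returns the maximum allowed fraction of nominal speed
# (0.0 = stop, 1.0 = full speed), or None if it has no trustworthy output.
Layer = Callable[[], Optional[float]]


def arbitrate(layers: Dict[str, Layer]) -> float:
    """Most-restrictive-wins: apply the lowest limit; any failed layer forces 0.0."""
    allowed = 1.0
    for layer in layers.values():
        try:
            limit = layer()
        except Exception:
            limit = None          # a crashing layer is treated like a silent one
        if limit is None:
            return 0.0            # fail-safe default: stop
        allowed = min(allowed, max(0.0, min(1.0, limit)))
    return allowed


def broken_layer() -> Optional[float]:
    raise TimeoutError("perception pipeline timed out")


print(arbitrate({"safety_plc": lambda: 1.0, "perception": lambda: 0.6, "ai_planner": lambda: 0.8}))
print(arbitrate({"safety_plc": lambda: 1.0, "perception": lambda: 0.6, "ai_planner": broken_layer}))
```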

4.2 Safety-Certified AI Systems

Integrating AI into safety-critical applications demands rigorous validation:

- Verification of perception algorithms under diverse conditions

- Simulation-based testing for collision scenarios

- Continuous monitoring of sensor integrity and algorithm performance

Certification standards for AI in industrial robotics are emerging, emphasizing reliability, explainability, and fail-safe design.
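Simulation-based testing can be as elaborate as a full physics simulator, but the core idea fits in a few lines: sample many randomized scenarios, run the safety logic, and count violations. The toy model below is a hypothetical closed-form 1-D approach scenario in which the human and robot close along a line, the robot brakes at a fixed deceleration, and the human is assumed to keep walking. All ranges and parameters are assumptions. A fixed random seed keeps the estimate reproducible, which matters when results feed a validation record.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    d0: float        # initial separation, m
    v_human: float   # human approach speed, m/s
    latency: float   # detection-to-braking latency, s


def separation_at_rest(s: Scenario, trigger: float, v_robot: float, decel: float) -> float:
    """Separation (m) once the robot has stopped, assuming the human keeps
    approaching at constant speed throughout (worst case)."""
    d = min(s.d0, trigger)                    # braking logic fires at `trigger`, or at once if already inside
    d -= (s.v_human + v_robot) * s.latency    # both keep moving during the latency
    t_brake = v_robot / decel
    d -= v_robot * t_brake / 2.0              # robot braking distance v^2 / (2a)
    d -= s.v_human * t_brake                  # human travel while the robot brakes
    return d


def violation_rate(n: int, trigger: float, seed: int = 0, v_robot: float = 0.5,
                   decel: float = 2.0, contact: float = 0.1) -> float:
    """Fraction of n randomized scenarios in which the robot stops closer than `contact`."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(n):
        s = Scenario(d0=rng.uniform(0.5, 3.0),
                     v_human=rng.uniform(0.5, 2.0),
                     latency=rng.uniform(0.05, 0.30))
        if separation_at_rest(s, trigger, v_robot, decel) < contact:
            failures += 1
    return failures / n


for trigger in (0.6, 1.0, 1.5):
    print(f"trigger {trigger:.1f} m -> violation rate {violation_rate(10_000, trigger):.3f}")
```

With the same seed, a larger trigger distance can never increase the violation rate in this model, because the starting separation min(d0, trigger) never decreases.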

4.3 Real-World Implementation Examples

- Automotive assembly lines: Cobots adjust motion dynamically based on operator location

- Medical assistance robots: AI predicts staff movement and modulates force in patient handling

- Collaborative warehouses: Multi-robot systems dynamically re-route to avoid human workers

These implementations demonstrate the synergy of regulations, sensing, and AI.

5. Challenges in Ensuring Safety

5.1 Sensor Limitations

- Occlusions and blind spots in dynamic environments

- Environmental factors such as lighting or dust affecting vision sensors

- Sensor drift and calibration issues over time

5.2 AI Uncertainty

- Misclassification or prediction errors in human behavior

- Handling edge cases not seen in training data

- Balancing responsiveness with smooth task execution
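A common mitigation for misclassifications and unseen inputs is to treat low-confidence or out-of-distribution perception results as if a person were present. The sketch assumes that the perception stack supplies both a confidence score and a separate novelty flag. The 0.8 threshold and the reduced speed fraction are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    label: str               # e.g. "person", "cart"
    confidence: float        # classifier score in [0, 1]
    in_distribution: bool    # from a separate novelty / OOD check (assumed to exist)


REDUCED_SPEED = 0.25   # hypothetical fraction of nominal speed near people
FULL_SPEED = 1.0


def allowed_speed(det: Detection, min_confidence: float = 0.8) -> float:
    """Uncertain or unfamiliar detections get the same treatment as a person."""
    if not det.in_distribution or det.confidence < min_confidence:
        return REDUCED_SPEED
    if det.label == "person":
        return REDUCED_SPEED
    return FULL_SPEED


print(allowed_speed(Detection("cart", 0.95, True)))    # confident, familiar object
print(allowed_speed(Detection("cart", 0.55, True)))    # low confidence -> fallback
print(allowed_speed(Detection("cart", 0.97, False)))   # novel input -> fallback
```

The trade-off in the last bullet above shows up directly in `min_confidence`. Lowering it cuts unnecessary slowdowns but lets more misclassified objects through at full speed.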

5.3 Regulatory and Standardization Gaps

- Emerging AI-driven safety mechanisms may lack explicit certification guidelines

- Harmonization between international standards (ISO, IEC) and national or regional ones (such as ANSI in the U.S.) is ongoing

- Continuous adaptation needed as collaborative robotics evolves

5.4 Multi-Modal Integration Complexity

Combining multiple sensor types with AI for predictive control requires:

- High-bandwidth real-time data processing

- Sophisticated sensor fusion algorithms

- Robust fail-safe mechanisms in case of system malfunction
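A basic fail-safe building block for real-time pipelines is a watchdog: if a sensor or inference stage stops delivering fresh data within its deadline, the controller falls back to a stop. This software-only sketch uses hypothetical class and function names and an injectable clock so that it can be tested. Safety-rated systems typically pair such logic with hardware watchdogs.

```python
import time
from typing import Callable, Iterable


class Watchdog:
    """Flags a stall when no fresh data arrived within `timeout` seconds."""

    def __init__(self, timeout: float, clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout = timeout
        self.clock = clock          # monotonic by default, immune to wall-clock jumps
        self.last_kick = clock()

    def kick(self) -> None:
        """Call whenever a new, validated sample arrives."""
        self.last_kick = self.clock()

    def expired(self) -> bool:
        return self.clock() - self.last_kick > self.timeout


def control_step(watchdogs: Iterable[Watchdog], nominal_cmd: float) -> float:
    """Pass the nominal command through only while every input is fresh."""
    if any(w.expired() for w in watchdogs):
        return 0.0
    return nominal_cmd


# Example with a simulated clock (seconds).
now = [0.0]
camera_wd = Watchdog(timeout=0.05, clock=lambda: now[0])
now[0] = 0.03
print(control_step([camera_wd], 1.0))   # data still fresh
now[0] = 0.12
print(control_step([camera_wd], 1.0))   # camera stalled -> stop
```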

6. Future Directions in Collaborative Safety

6.1 Predictive and Adaptive AI

Future systems will increasingly:

- Learn from ongoing human-robot interactions

- Adapt to individual worker behavior and ergonomics

- Optimize robot motion for safety and productivity simultaneously

6.2 Enhanced Sensing Technologies

- Next-generation 3D LiDAR with sub-centimeter resolution

- Smart textiles and wearable sensors for real-time human state monitoring

- Integrated multi-sensor platforms with self-diagnosis capabilities

6.3 Regulation Evolution

- Standards incorporating AI-based predictive safety measures

- Guidelines for validation, verification, and explainable AI in industrial robotics

- Harmonized global safety protocols for collaborative operations

6.4 Human-Centered Collaborative Design

- Ergonomic workspace layouts informed by AI simulation

- Adaptive interfaces for intuitive human-robot communication

- Continuous risk assessment based on operational data

7. Case Studies of Safe Collaborative Systems

7.1 Automotive Industry Cobots

- Implementation: AI-driven trajectory adjustment using LiDAR and vision

- Safety outcome: Reduced collisions and human fatigue, compliant with ISO/TS 15066

7.2 Healthcare Robotics

- Implementation: Force-sensing end-effectors with predictive AI for patient handling

- Safety outcome: Dynamic compliance ensures patient and staff safety

7.3 Smart Warehouses

- Implementation: Multi-robot fleet with AI scheduling and human tracking

- Safety outcome: Zero reported collisions in mixed human-robot operations

These examples highlight practical integration of regulations, sensors, and AI.

8. Best Practices for Implementing Collaborative Safety

- Conduct thorough risk assessments per ISO/TS 15066 guidelines

- Use multi-modal sensing to ensure robust human detection

- Employ AI for predictive motion and adaptive control

- Incorporate redundancy and fail-safe mechanisms

- Continuously monitor and evaluate system performance

- Engage in iterative design to balance productivity and safety

Conclusion: A Synergistic Approach to Human-Robot Collaboration Safety

Ensuring safety in collaborative environments is not a single-discipline problem. It requires the integration of:

- Regulations to define safety baselines and risk thresholds

- Sensors to perceive humans, objects, and the environment accurately

- AI decision-making to predict, adapt, and act proactively

When these elements work together, collaborative robots can operate efficiently, safely, and seamlessly alongside humans. As robotics continues to permeate industry, healthcare, and everyday life, the synergy of regulations, sensor technologies, and AI will define the next generation of safe human-robot interaction.