Introduction

As robotics transitions from industrial automation to service, healthcare, and personal-assistance applications, the ability of machines to perceive, interpret, and respond to physical interactions becomes increasingly critical. High-precision interaction requires not only advanced control algorithms but also physical perception systems capable of detecting forces, textures, temperature, and proximity.

Three interrelated technological domains are shaping the next generation of interactive robots:

- Tactile Sensing – enabling robots to “feel” objects, surfaces, and human touch.

- Soft and Flexible Robotic Skin – providing compliance, safety, and distributed sensing.

- Multimodal Sensing – integrating tactile, visual, auditory, and proprioceptive inputs to create holistic perception.

This article surveys these technologies: their principles, state-of-the-art implementations, applications, challenges, and future directions.

1. Tactile Sensing: Giving Robots the Sense of Touch

1.1 Principles of Tactile Sensing

Tactile sensors detect contact forces, pressure distribution, texture, and vibration. Core modalities include:

- Resistive Sensors – detect pressure through changes in electrical resistance.

- Capacitive Sensors – measure deformation by changes in capacitance.

- Piezoelectric Sensors – generate voltage in response to mechanical stress, sensitive to dynamic forces.

- Optical Tactile Sensors – use light modulation within soft materials to detect contact and force vectors.
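
To make the capacitive principle concrete, the following minimal sketch uses the ideal parallel-plate model C = ε0 · εr · A / d, in which pressing the sensor narrows the dielectric gap and raises the capacitance. The taxel dimensions and permittivity are hypothetical values chosen for illustration, and real sensors deviate from this model through fringing fields and nonlinear elastomer behaviour.

```python
EPSILON_0 = 8.854e-12  # vacuum permittivity in F/m


def plate_capacitance(area_m2, gap_m, rel_permittivity):
    """Ideal parallel-plate capacitance C = eps0 * eps_r * A / d."""
    if gap_m <= 0:
        raise ValueError("gap must be positive")
    return EPSILON_0 * rel_permittivity * area_m2 / gap_m


def gap_from_capacitance(capacitance_f, area_m2, rel_permittivity):
    """Invert the same model to recover the dielectric gap from a reading."""
    if capacitance_f <= 0:
        raise ValueError("capacitance must be positive")
    return EPSILON_0 * rel_permittivity * area_m2 / capacitance_f


# Hypothetical taxel: 2 mm x 2 mm electrode, 100 um elastomer gap, eps_r = 3.
area = 2e-3 * 2e-3
c_rest = plate_capacitance(area, 100e-6, 3.0)
c_pressed = plate_capacitance(area, 80e-6, 3.0)  # gap compressed by 20 %

print(f"rest: {c_rest * 1e12:.3f} pF, pressed: {c_pressed * 1e12:.3f} pF")
# prints "rest: 1.062 pF, pressed: 1.328 pF"
```

In this idealized model a 20 % reduction in gap produces a 25 % increase in capacitance, which is why readout electronics typically track the change relative to a resting baseline.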

1.2 Applications of Tactile Sensing

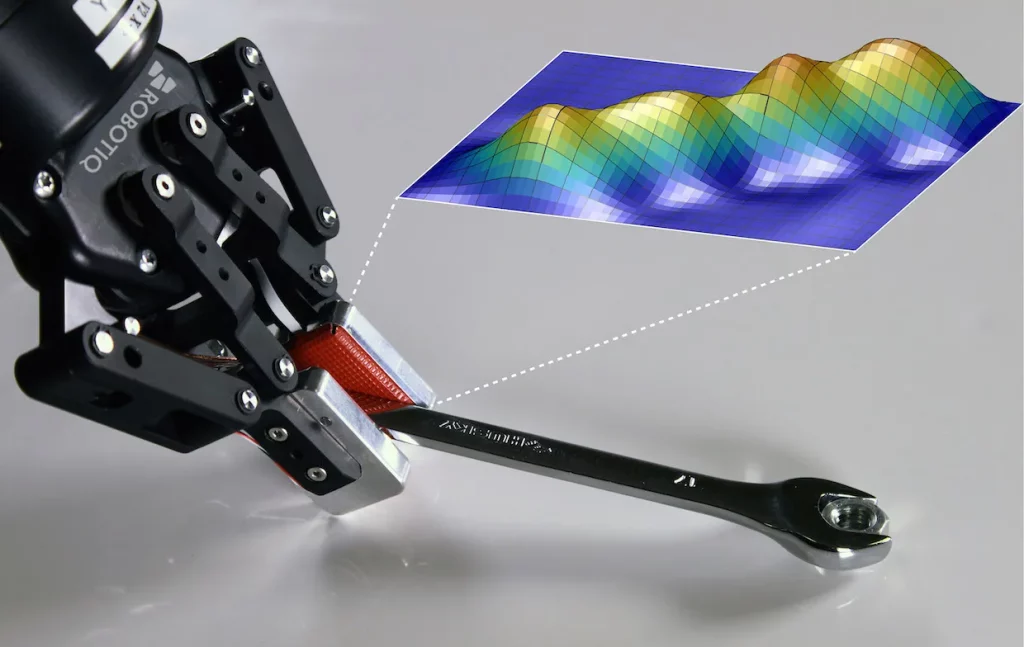

- Robotic Manipulation: Grippers can adapt grip strength, reduce slippage, and handle delicate objects.

- Medical Robotics: Surgical robots sense tissue compliance to avoid damage.

- Human-Robot Interaction (HRI): Detecting gentle human touch for safe collaboration.
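
As an illustration of how a gripper might adapt grip strength to reduce slippage, the sketch below raises the commanded force when a slip indicator exceeds a threshold and relaxes it slowly otherwise. The function name, the normalized slip signal, and all thresholds and step sizes are hypothetical; a real controller would depend on the specific sensor and gripper.

```python
def adapt_grip(force_n, slip_signal, slip_threshold=0.5, step_n=0.2,
               relax_n=0.02, min_force_n=0.5, max_force_n=10.0):
    """Return the next grip force command in newtons.

    slip_signal is assumed to be a normalized slip indicator, for example
    high-frequency vibration energy from a tactile sensor scaled to 0..1.
    """
    if slip_signal > slip_threshold:
        force_n += step_n    # slip detected: tighten quickly
    else:
        force_n -= relax_n   # stable grasp: relax slowly to protect the object
    # Clamp so the command never drops the object or crushes it.
    return min(max(force_n, min_force_n), max_force_n)


# Hypothetical sequence of slip readings over four control cycles.
force = 1.0
for slip in [0.1, 0.9, 0.8, 0.2]:
    force = adapt_grip(force, slip)

print(f"final grip force: {force:.2f} N")  # prints "final grip force: 1.36 N"
```

The asymmetric step sizes (fast tightening, slow relaxation) are one possible design choice that favours holding the object over minimizing force.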

1.3 State-of-the-Art Examples

- GelSight Sensors: Use a camera behind a soft elastomer layer to image how the surface deforms on contact, yielding high-resolution contact maps.

- BioTac Sensors: Provide force, vibration, and temperature sensing to emulate human fingertips.

- Electronic Skin Arrays: Distributed arrays allow robots to map contact across a surface, supporting whole-body tactile perception.
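
One simple way to turn a distributed array reading into a contact estimate is to sum the pressure and compute its weighted centroid. The sketch below assumes a hypothetical rectangular grid of taxels given as nested lists; actual electronic skin layouts and units vary.

```python
def contact_summary(pressure_map, threshold=0.0):
    """Return (total_pressure, (row, col) centroid) or None if no contact.

    pressure_map is assumed to be a rectangular grid (list of rows) of
    per-taxel pressure readings; values at or below threshold are ignored.
    """
    total = 0.0
    row_moment = 0.0
    col_moment = 0.0
    for r, row in enumerate(pressure_map):
        for c, p in enumerate(row):
            if p > threshold:
                total += p
                row_moment += r * p
                col_moment += c * p
    if total == 0.0:
        return None  # nothing is touching the array
    return total, (row_moment / total, col_moment / total)


# Hypothetical 3 x 3 patch with contact spanning two neighbouring taxels.
patch = [
    [0.0, 0.0, 0.0],
    [0.0, 2.0, 2.0],
    [0.0, 0.0, 0.0],
]
print(contact_summary(patch))  # prints "(4.0, (1.0, 1.5))"
```

The centroid falls between taxels, showing how an array can localize contact more finely than its taxel spacing.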

1.4 Challenges in Tactile Sensing

- High sensor density increases data bandwidth and processing requirements.

- Achieving robustness under wear, contamination, and repeated use is critical for long-term deployment.

- Integrating tactile sensing with real-time control algorithms is nontrivial, especially in dynamic environments.
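
The bandwidth concern can be estimated with simple arithmetic: raw data rate is the number of taxels times the sampling rate times the bits per sample. The figures in this sketch (1024 taxels, 1 kHz, 12-bit samples) are hypothetical and do not describe any particular sensor.

```python
def raw_data_rate_bps(num_taxels, sample_rate_hz, bits_per_sample):
    """Uncompressed data rate in bits per second, ignoring protocol overhead."""
    return num_taxels * sample_rate_hz * bits_per_sample


# Hypothetical skin patch: 1024 taxels sampled at 1 kHz with 12-bit readings.
rate = raw_data_rate_bps(1024, 1000, 12)
print(f"{rate / 1e6:.3f} Mbit/s")  # prints "12.288 Mbit/s"
```

Doubling the taxel count or the sampling rate doubles this figure, so the rate grows quickly when a small patch is scaled up to whole-body coverage.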

2. Soft and Flexible Robotic Skin

2.1 Advantages of Soft Robotic Skin

- Compliance: Reduces the risk of injury when robots interact with humans.

- Distributed Sensing: Enables pressure, stretch, and deformation measurement across large surfaces.

- Enhanced Dexterity: Flexible skin allows adaptation to irregular shapes during grasping or contact.

2.2 Materials and Technologies

- Elastomers: Silicone and polyurethane for deformable, biocompatible surfaces.

- Conductive Polymers: Allow electrical sensing across stretchable surfaces.

- Liquid Metal Networks: Embedded microchannels filled with gallium alloys for strain detection.

- Hydrogels: Soft, water-rich materials suitable for tactile and temperature sensing.
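
For the liquid metal case, a common idealization shows why microchannels work as strain sensors. If a channel of resistance R0 = ρL/A is stretched uniformly by strain ε and the liquid is incompressible, the length grows by (1 + ε) while the cross-section shrinks by the same factor, giving R/R0 = (1 + ε)². The sketch below encodes that relation and its inverse; it assumes uniform uniaxial stretch and ignores contact resistance and channel collapse.

```python
import math


def relative_resistance(strain):
    """R / R0 for an incompressible channel under uniform uniaxial strain."""
    if strain <= -1.0:
        raise ValueError("strain must be greater than -1")
    return (1.0 + strain) ** 2


def strain_from_resistance(resistance, rest_resistance):
    """Invert the model: estimate strain from a measured resistance."""
    if resistance <= 0 or rest_resistance <= 0:
        raise ValueError("resistances must be positive")
    return math.sqrt(resistance / rest_resistance) - 1.0


# 10 % stretch raises resistance by about 21 % in this idealized model.
print(f"{relative_resistance(0.10):.2f}")           # prints "1.21"
print(f"{strain_from_resistance(1.21, 1.0):.2f}")   # prints "0.10"
```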

2.3 Applications of Soft Robotic Skin

- Prosthetics: Artificial limbs with tactile feedback for precise manipulation.

- Wearable Robotics: Exoskeletons equipped with soft sensors for safe force application.

- Service Robots: Cobots with compliant skin can safely interact in homes, hospitals, and public spaces.

2.4 Challenges

- Durability: Maintaining elasticity and sensing accuracy under repeated mechanical stress.

- Signal Processing: High-density, flexible sensor arrays produce complex, high-dimensional data requiring sophisticated algorithms.

- Integration: Combining soft skin with rigid components (actuators, frames) without sacrificing performance.

3. Multimodal Sensing: Combining Touch, Vision, and Proprioception

3.1 Concept of Multimodal Sensing

Robots operating in dynamic, unstructured environments benefit from combining multiple sensing modalities:

- Tactile Sensors – detect direct contact, pressure, and texture.

- Vision Systems – provide spatial awareness, object recognition, and motion estimation.

- Proprioception – internal measurement of joint angles, forces, and velocities.

- Auditory Sensors – capture speech and contact sounds, supporting interaction in human-centric environments.

3.2 Advantages

- Robust Perception: Compensates for limitations in any single modality.

- Context-Aware Interaction: Enables adaptive behavior based on object properties and environment dynamics.

- Enhanced Learning: Multimodal data improves AI models for grasping, manipulation, and navigation.
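
A standard way to see how combining modalities compensates for the limits of each is inverse-variance weighting, where every estimate is weighted by its confidence. The sketch below fuses independent estimates of one scalar quantity; the vision and tactile numbers are hypothetical, and practical systems usually use richer filters that also model correlation and dynamics.

```python
def fuse_estimates(estimates):
    """Fuse independent (value, variance) pairs by inverse-variance weighting.

    Returns (fused_value, fused_variance). Variances must be positive.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = []
    for _, variance in estimates:
        if variance <= 0:
            raise ValueError("variance must be positive")
        weights.append(1.0 / variance)
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates))
    return fused_value / total_weight, 1.0 / total_weight


# Hypothetical object-edge position in mm: vision is coarse, touch is precise.
vision = (10.0, 4.0)
tactile = (12.0, 1.0)
value, variance = fuse_estimates([vision, tactile])
print(f"{value:.1f} mm, variance {variance:.1f}")  # prints "11.6 mm, variance 0.8"
```

The fused variance is lower than that of either input, and the result leans toward the more certain tactile reading.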

3.3 State-of-the-Art Implementations

- Robotic Hands with Integrated Sensors: Vision-guided grasp combined with tactile feedback for dexterous manipulation.

- Soft Cobots: Use skin pressure arrays and vision to detect human intent and avoid collisions.

- Autonomous Mobile Robots: Combine LiDAR, cameras, tactile bump sensors, and IMUs for precise navigation in crowded environments.

4. Applications of Tactile, Soft, and Multimodal Technologies

4.1 Industrial Automation

- Precision assembly and quality control in electronics and microfabrication.

- Detection and careful handling of fragile or irregular objects using tactile and force feedback.

4.2 Healthcare and Medical Robotics

- Minimally invasive surgery using tactile-enabled end-effectors.

- Prosthetic devices that provide realistic tactile feedback to users.

4.3 Service and Collaborative Robotics

- Cobots operating safely in proximity to humans.

- Household robots capable of gentle object handling and adaptive interaction.

4.4 Human-Robot Interaction (HRI)

- Social robots detecting human touch for emotional response and engagement.

- Haptic feedback in VR/AR systems enhancing immersion and training applications.

5. Technical Challenges

5.1 Data Processing and AI Integration

- Tactile and multimodal sensors generate large, high-frequency datasets.

- Machine learning methods, including deep learning, are increasingly relied on to interpret this sensor data in real time.

5.2 Sensor Calibration and Reliability

- Maintaining accuracy under variable temperature, humidity, and mechanical stress.

- Cross-modal calibration is critical to avoid inconsistent perception.
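
A basic calibration step for a single sensing element is a least-squares fit that maps raw readings to reference values. The sketch below fits a gain and offset in pure Python; the raw counts and reference forces are hypothetical, and a real procedure would likely add temperature terms and repeat the fit as the sensor ages.

```python
def fit_linear_calibration(raw, reference):
    """Least-squares fit of reference = gain * raw + offset."""
    if len(raw) != len(reference) or len(raw) < 2:
        raise ValueError("need two or more paired samples")
    n = len(raw)
    mean_x = sum(raw) / n
    mean_y = sum(reference) / n
    sxx = sum((x - mean_x) ** 2 for x in raw)
    if sxx == 0:
        raise ValueError("raw readings must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(raw, reference))
    gain = sxy / sxx
    offset = mean_y - gain * mean_x
    return gain, offset


# Hypothetical data: raw ADC counts recorded against known forces in newtons.
raw_counts = [100, 200, 300, 400]
known_force_n = [0.0, 1.0, 2.0, 3.0]
gain, offset = fit_linear_calibration(raw_counts, known_force_n)

# Apply the calibration to a new raw reading of 250 counts.
print(f"{gain * 250 + offset:.2f} N")  # prints "1.50 N"
```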

5.3 Materials and Manufacturing

- Scaling production of flexible, durable sensor arrays remains technologically and economically challenging.

- Advanced microfabrication, 3D printing, and composite materials are likely to be important for mass adoption.

6. Future Directions

6.1 Soft Robotic Surfaces with Embedded Intelligence

- Skin materials with onboard processing and localized AI for real-time tactile interpretation.

6.2 Haptic Perception Networks

- Distributed tactile networks enabling whole-body sensing for humanoid robots and exoskeletons.

6.3 Multimodal AI Learning

- Deep learning models combining tactile, visual, auditory, and proprioceptive data.

- Robots capable of self-supervised learning to adapt to new environments and tasks.

6.4 Bioinspired Design

- Emulating human skin and mechanoreceptors for high-resolution, sensitive interaction.

- Integration of soft tissue compliance and nerve-like sensing for dexterous manipulation.

6.5 Swarm and Cooperative Tactile Robotics

- Multiple robots equipped with tactile and multimodal sensors working collectively for complex tasks in manufacturing, healthcare, and exploration.

7. Strategic Implications for Industry

- Manufacturers: Adoption of tactile and multimodal technologies increases robot dexterity and reduces the need for human oversight.

- Healthcare Providers: Enhanced interaction capabilities improve patient safety and procedure precision.

- Service Providers: Robots with tactile perception enable safe, adaptive, and socially aware operation.

- Investors and R&D: Companies developing advanced soft sensors, AI perception models, and modular robotic skin have significant market potential.

Conclusion

Tactile sensing, soft robotic skin, and multimodal sensors are fundamental enablers for the next generation of high-precision, interactive robots. By providing robots with the ability to feel, adapt, and respond intelligently, these technologies expand their application domains across industrial, medical, service, and personal robotics.

The convergence of advanced materials, high-resolution sensors, and AI-driven perception is transforming robots from rigid tools into perceptive, adaptive, and safe collaborators in human-centric environments. Overcoming challenges in durability, signal processing, and multimodal integration will be key to unlocking the full potential of high-precision robotic interaction.